Unlock crucial business data by mastering website anti-scraping. Our 2026 guide covers proven strategies from IP rotation to headless browsers...

The internet holds your next competitive advantage — competitor pricing, market signals, lead intelligence, and industry trends — updated in real time, every day. The challenge isn’t that this data exists. The challenge is capturing it at scale, with precision, speed, and full regulatory compliance. That’s exactly where Hir Infotech leads.

With 13+ years of proven expertise, 2,745+ satisfied clients across the USA, Europe, and Australia, Hir Infotech delivers enterprise-grade, AI-driven web scraping services that extract, structure, and deliver the data your business needs to move faster and decide smarter. Trusted by B2B decision-makers from mid-market companies to global enterprises, we are your strategic partner for scalable, compliant, and intelligent web data extraction.

2.5B+ Data Records Delivered

99.4%+ Extraction Accuracy

2,745+ Happy Clients

13+ Years of Expertise

25+ Industries Served

In 2026, data velocity separates market leaders from followers. Businesses that wait for monthly reports lose ground to competitors who act on real-time intelligence gathered hourly. AI-driven web scraping is the systematic, automated collection of structured data from public web sources — powered by machine learning models that understand dynamic page structures, bypass anti-bot mechanisms, adapt to site changes autonomously, and deliver clean, analysis-ready datasets at enterprise scale. For B2B companies in the USA, UK, Germany, France, Netherlands, Sweden, Austria, Switzerland, Denmark, Spain, Italy, and Australia, the competitive case for AI web scraping has never been stronger. Organizations implementing AI-driven extraction and acting on insights within hours outperform competitors by up to 47%, generating measurably more value per data deployment. Hir Infotech's AI-driven web scraping infrastructure processes millions of data points daily, delivering structured outputs via API, JSON, CSV, or direct database integration.

Hir Infotech serves clients across Europe and the USA with dedicated regional compliance frameworks, ensuring that enterprises in Germany, France, Sweden, and the Netherlands receive scraping solutions designed around EU data governance standards from day one.

Hir Infotech’s AI scraping infrastructure combines custom crawlers, rotating proxy networks, NLP-enriched parsing, and adaptive machine learning models to deliver structured data at any volume — reliably, repeatably, and compliantly.

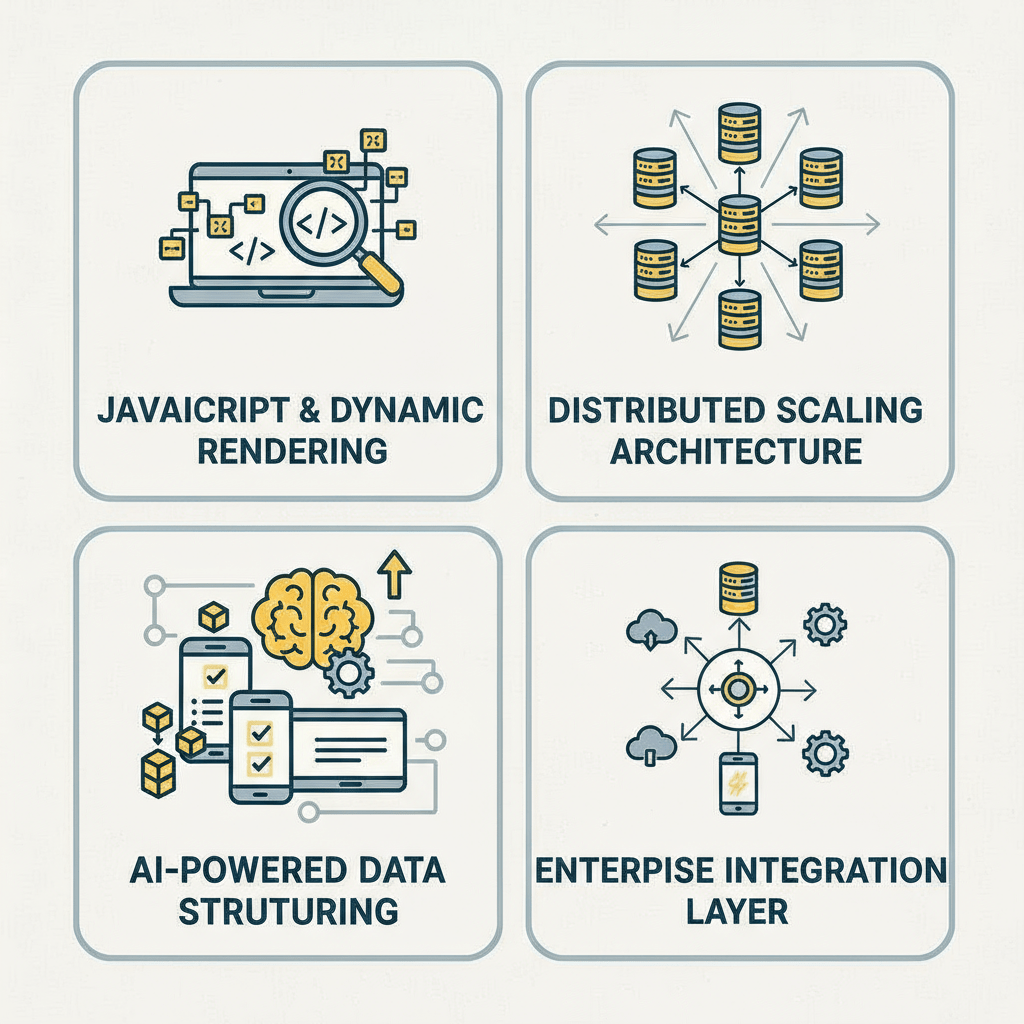

Hir Infotech’s headless browser infrastructure extracts data from React, Angular, and Vue.js-driven websites that traditional scrapers cannot parse. This is critical for SaaS platforms, e-commerce storefronts, and financial portals where live content is loaded client-side, and it lets us handle dynamic site architectures that static HTML parsing misses.

Raw scraped content is automatically enriched using natural language processing — categorizing, tagging, deduplicating, and normalizing data before delivery. This means your team receives insight-ready datasets, not raw HTML dumps requiring manual cleanup.
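As a rough illustration of the normalizing and deduplicating step described above, the sketch below uses plain Python rather than an NLP model. The function names, field names, and sample records are hypothetical and are not taken from Hir Infotech's pipeline.

```python
import re
from typing import Iterable


def normalize_record(record: dict) -> dict:
    """Collapse runs of whitespace and trim every string field."""
    cleaned = {}
    for key, value in record.items():
        if isinstance(value, str):
            value = re.sub(r"\s+", " ", value).strip()
        cleaned[key] = value
    return cleaned


def deduplicate(records: Iterable[dict], key_fields: tuple) -> list:
    """Keep the first record seen for each case-insensitive key."""
    seen = set()
    unique = []
    for record in map(normalize_record, records):
        key = tuple(str(record.get(field, "")).lower() for field in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Hypothetical scraped rows: the first two differ only in spacing and case.
raw = [
    {"name": "  Acme   Widget ", "price": "19.99"},
    {"name": "acme widget", "price": "19.99"},
    {"name": "Other Item", "price": "5.00"},
]
clean = deduplicate(raw, key_fields=("name", "price"))
```

Here `clean` keeps two of the three rows. A production pipeline would likely add categorizing and tagging on top of this, which the sketch does not attempt.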

Enterprise-grade IP rotation, CAPTCHA resolution, and user-agent management ensure continuous, uninterrupted extraction from high-protection websites. Our proprietary anti-detection layer maintains 99.4%+ data continuity across long-running enterprise projects.

Configure recurring crawls (hourly, daily, weekly) or event-triggered extraction pipelines that activate when specific market conditions, price thresholds, or content changes occur — enabling proactive, data-driven decision-making at enterprise speed.
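One way to picture an event-triggered pipeline is a simple price-threshold check that decides whether a follow-up extraction should run. This is a minimal sketch under stated assumptions: the function names and the 5% default threshold are illustrative, not part of the service configuration.

```python
def price_change_pct(previous: float, current: float) -> float:
    """Return the signed percentage change from previous to current."""
    if previous == 0:
        raise ValueError("previous price must be non-zero")
    return (current - previous) / previous * 100.0


def should_trigger(previous: float, current: float,
                   threshold_pct: float = 5.0) -> bool:
    """Trigger when the price moved by at least threshold_pct in either direction."""
    return abs(price_change_pct(previous, current)) >= threshold_pct


# A 6% drop crosses the assumed 5% threshold; a 2% drop does not.
big_move = should_trigger(100.0, 94.0)
small_move = should_trigger(100.0, 98.0)
```

The same check could sit behind an hourly or daily scheduler, so that a recurring crawl only fans out into deeper extraction when a threshold is crossed.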

Hir Infotech scrapes product listings, pricing tiers, promotional offers, and stock availability from major e-commerce platforms including Amazon, Shopify stores, Walmart Marketplace, and B2B wholesale portals. Updated pricing intelligence helps procurement, revenue management, and sales teams stay competitive in real time across USA, UK, and European markets.

We extract structured business data from directories such as Yelp (USA), Yell (UK), Kompass (Europe), and TrueLocal (Australia) — including company names, decision-maker contacts, industry categories, and geographic data. Ideal for sales prospecting and CRM enrichment teams needing verified, GDPR-compliant lead data.

Our scrapers continuously harvest listing data from Zillow (USA), Rightmove (UK), Immobilienscout24 (Germany), and Domain.com.au (Australia) — capturing property specs, pricing history, and market velocity. Real estate technology companies and investment firms use this data for AVM models, portfolio analytics, and deal sourcing automation.

Hir Infotech extracts structured financial data from public filings, news wires, earnings portals, and regulatory databases — including SEC filings (USA), Companies House (UK), and Bundesanzeiger (Germany). Asset managers and fintech firms use these feeds to power quantitative models, ESG scoring, and portfolio risk monitoring.

We harvest job postings from Indeed (Global), LinkedIn (Global), Stepstone (Germany), and Seek (Australia) to map hiring trends, skill demand signals, compensation benchmarks, and competitor talent acquisition patterns — giving HR, workforce planning, and market research teams continuous labor market intelligence.

Hir Infotech aggregates data from ClinicalTrials.gov (USA), EMA (Europe), and TGA (Australia), plus pharma pricing databases and formulary platforms. Life sciences companies and healthcare analytics firms use this to monitor pipeline activity, pricing trends, and regulatory changes across multiple jurisdictions simultaneously.

FinTech and financial services firms in the UK, Switzerland, and the Netherlands demand the highest data accuracy and regulatory compliance. Hir Infotech appends verified decision-maker contacts, FCA-registered entity details, AUM data, and role-specific email addresses to financial services prospect lists, all delivered with GDPR compliance documentation and full data lineage traceability.

We build custom news aggregation pipelines pulling from thousands of RSS feeds, news portals, trade publications, and social listening sources across English, German, French, Spanish, Italian, and Dutch. Marketing, PR, and strategy teams receive curated, tagged intelligence feeds for brand monitoring, crisis detection, and competitive narrative tracking.

Hir Infotech automates extraction from government procurement portals (SAM.gov USA, TED EU, AusTender Australia), regulatory agency publications, and public company registries. Legal, compliance, and business development teams use this data for tender monitoring, regulatory impact assessment, and public sector opportunity identification.

The Strategic Case for Outsourced AI Web Scraping

Building and maintaining an internal web scraping infrastructure is expensive, technically complex, and increasingly difficult to keep compliant. Anti-bot technologies on major platforms grow more sophisticated monthly. GDPR enforcement in Europe continues to intensify, with regulators in Germany, France, and the Netherlands issuing record fines for mishandled data collection. CCPA interpretations in California have broadened significantly in 2025-2026. For B2B organizations that need reliable, structured web data without the engineering overhead, Hir Infotech’s managed AI scraping service eliminates the build-vs-buy risk entirely.

Our enterprise clients — including e-commerce platforms in the USA, financial services firms in the UK and Germany, and SaaS companies scaling across the EU — consistently achieve ROI within the first 90 days of deployment. By replacing manual research processes with automated data pipelines, teams redirect hundreds of analyst-hours per month toward higher-value strategy work. With 2,745+ happy clients served over 13+ years, Hir Infotech has the production-proven track record that enterprise procurement teams demand before onboarding a mission-critical data partner.

Why Compliance-First Scraping Is a Competitive Differentiator in Europe and the USA

At Hir Infotech, compliance isn’t a checkbox — it’s an architectural principle embedded in every scraping pipeline we build. Every project begins with a domain risk assessment: we evaluate robots.txt directives, platform Terms of Service, applicable data protection regulations (GDPR, CCPA, PDPA), and the nature of the data being extracted before a single crawl is executed. This pre-project compliance review is standard practice, not an add-on, and provides our enterprise clients with the audit trail documentation required by their legal and data governance teams.
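The robots.txt part of such an assessment can be sketched with Python's standard `urllib.robotparser` module. The robots.txt content, the `ExampleBot` user agent, and the URLs below are made up for illustration, and the check covers only robots.txt directives, not Terms of Service or data protection review.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real check would fetch the site's own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Report whether robots.txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


public_ok = is_allowed(ROBOTS_TXT, "ExampleBot", "https://example.com/products")
private_ok = is_allowed(ROBOTS_TXT, "ExampleBot", "https://example.com/private/data")
```

Parsing from a string keeps the example offline. Recording the result of each check alongside the crawl configuration is one way to feed the audit trail mentioned above.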

Operationally, our AI web scraping service is fully managed, meaning Hir Infotech’s engineering team monitors crawl health, adapts to site structure changes, resolves anti-bot challenges, and ensures data quality continuously — without client engineering involvement. Delivery formats include JSON, CSV, XML, and direct API integration compatible with Salesforce, HubSpot, Snowflake, BigQuery, Power BI, and custom data warehousing environments. For B2B enterprises in Denmark, Austria, Iceland, Spain, Italy, and Switzerland, we offer EU-hosted data processing options to satisfy data residency requirements specific to your jurisdiction.

Client Background: A New York-based B2B wholesale distributor operating across 12 product categories, supplying retail chains across the eastern USA. Annual GMV exceeding $340 million, with a pricing team managing 85,000+ SKUs.

Challenge: The client’s pricing team was manually checking 200+ competitor URLs daily across Amazon Business, Grainger, and regional wholesale portals — a process consuming 14 analyst-hours per day, producing data that was already 18-24 hours stale by the time it reached decision-makers. Margin erosion from reactive pricing decisions was estimated at $2.1 million annually.

Solution: Hir Infotech designed and deployed a real-time competitive pricing intelligence pipeline covering 340 competitor URLs across 6 major B2B e-commerce platforms. The AI scraping system ran on a 2-hour refresh cycle, with NLP-enriched product matching to align non-identical SKUs across platforms. Outputs were delivered via API directly into the client’s existing pricing optimization platform.

Results: Within 60 days of deployment, the pricing team reduced manual monitoring effort by 94%. Average pricing decision latency dropped from 24 hours to 2 hours. The client reported recovering $1.4 million in margin within the first two quarters, with the scraping pipeline processing 1.2 million data points per week without a single data quality incident.

Client Testimonial: “Hir Infotech didn’t just give us data — they gave us the ability to move at market speed. The pipeline runs flawlessly and the compliance documentation their team provided made our legal sign-off faster than any vendor we’ve worked with.” — VP of Pricing, B2B Wholesale Distributor, New York.
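The case study above mentions matching non-identical SKUs across platforms. The source does not describe how that matching works, so the sketch below substitutes a plain string-similarity approach from Python's standard `difflib` module in place of an NLP model. The product titles, function names, and the 0.75 cutoff are all assumptions.

```python
import difflib
from typing import Optional


def normalize_title(title: str) -> str:
    """Lowercase the title and sort its words so word order does not matter."""
    return " ".join(sorted(title.lower().split()))


def match_product(title: str, catalog: list, cutoff: float = 0.75) -> Optional[str]:
    """Return the catalog title most similar to title, or None below the cutoff."""
    by_normalized = {normalize_title(item): item for item in catalog}
    hits = difflib.get_close_matches(
        normalize_title(title), list(by_normalized), n=1, cutoff=cutoff
    )
    return by_normalized[hits[0]] if hits else None


# Hypothetical competitor catalog and two titles from our own listings.
competitor_catalog = ["ACME 18V Cordless Drill Kit", "Bolt Cutter 24in"]
matched = match_product("Acme Cordless Drill 18V", competitor_catalog)
unmatched = match_product("Garden Hose 50ft", competitor_catalog)
```

Character-level similarity is a blunt instrument: it will miss synonyms and unit conversions that a trained matching model could catch, so it is best read as a baseline.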

Client Background: A Frankfurt-based alternative data provider supplying structured datasets to 40+ asset management and hedge fund clients across the EU. Regulated under BaFin and required to document all data sourcing for client compliance audits.

Challenge: The client needed to scale their web data acquisition from 120 monitored sources to 800+ without proportionally scaling their internal engineering team. Critically, every dataset needed full provenance documentation — source URL, extraction timestamp, lawful basis assessment, and retention policy — to satisfy fund clients’ regulatory reporting requirements under MiFID II.

Solution: Hir Infotech built a white-label data acquisition platform with full provenance logging embedded in the pipeline architecture. Each dataset delivered included a machine-readable compliance manifest detailing source, timestamp, extraction method, and regulatory assessment. The system was EU-hosted with German data residency, satisfying BaFin and GDPR requirements. 847 sources were onboarded within 45 days.

Results: The client scaled from 120 to 847 monitored sources with zero increase in internal engineering headcount. Audit response time for fund client data requests dropped from 3 days to 4 hours. The compliance manifest system was specifically cited by two fund clients as a reason for expanding their data subscription agreements.

Client Testimonial: “The provenance documentation Hir Infotech built into the pipeline is unlike anything we’ve seen from other vendors. Our funds’ compliance teams now use it as a benchmark for what good data sourcing looks like.” — Chief Data Officer, Alternative Data Provider, Frankfurt.
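A machine-readable compliance manifest of the kind described in this case study could look roughly like the following. The fields mirror the ones named above (source, timestamp, extraction method, lawful basis assessment, retention policy), but the exact field names, the JSON layout, and the sample values are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceEntry:
    source_url: str
    extracted_at: str  # ISO 8601 timestamp in UTC
    extraction_method: str
    lawful_basis_assessment: str
    retention_policy: str


def build_manifest(dataset_id: str, entries: list) -> str:
    """Serialize provenance entries to a JSON manifest with stable key order."""
    manifest = {
        "dataset_id": dataset_id,
        "entries": [asdict(entry) for entry in entries],
    }
    return json.dumps(manifest, indent=2, sort_keys=True)


# Hypothetical entry; every value here is a placeholder.
entry = ProvenanceEntry(
    source_url="https://example.com/filings/2026-01",
    extracted_at=datetime(2026, 1, 15, 6, 0, tzinfo=timezone.utc).isoformat(),
    extraction_method="headless-browser",
    lawful_basis_assessment="legitimate interest, public data",
    retention_policy="delete after 24 months",
)
manifest_json = build_manifest("demo-dataset", [entry])
```

Shipping one such manifest with every delivered dataset is what allows an audit request to be answered from the data itself rather than from engineering records.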

Client Background: A London-based SaaS company providing competitive intelligence software to 300+ B2B technology companies across the UK and Northern Europe. Their platform required continuous ingestion of product update announcements, pricing pages, and feature release notes from 5,000+ software vendor websites.

Challenge: The client’s existing scraping infrastructure suffered 23% data loss monthly due to site structure changes, anti-bot blocks, and JavaScript-rendered content that legacy scrapers couldn’t parse. Customer churn was rising as product intelligence gaps became visible to end users.

Solution: Hir Infotech migrated the client’s data acquisition layer to a fully managed AI scraping infrastructure with adaptive CSS selector recovery (automatically detects and corrects for site structure changes), headless browser rendering for JavaScript-heavy SaaS pricing pages, and a dedicated quality assurance layer that flagged anomalous data before it reached the client’s platform.

Results: Data loss rate dropped from 23% to under 1.2% within 30 days of migration. Coverage expanded from 5,000 to 7,400 software vendors without additional cost. Customer support tickets related to missing or stale intelligence dropped by 78%. The client reported a 19% reduction in churn rate in the following two quarters, directly attributable to improved data completeness.

Client Testimonial: “We’d tried three other scraping vendors before Hir Infotech. The difference in data reliability is extraordinary. Our customers finally trust the intelligence we provide, and our NPS has improved significantly since the migration.” — Head of Product, SaaS Market Intelligence Platform, London.
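The selector recovery mentioned in this case study is not specified in detail, so the sketch below shows only the simplest version of the idea: trying an ordered list of fallback paths when the primary one stops matching. It uses the standard library's `xml.etree.ElementTree`, which assumes well-formed markup, and the paths and sample layouts are hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Optional

# Ordered from the preferred path to progressively looser fallbacks (assumed).
CANDIDATE_PATHS = [
    ".//span[@class='price']",
    ".//div[@class='plan-price']",
    ".//*[@itemprop='price']",
]


def extract_price(markup: str, paths=CANDIDATE_PATHS) -> Optional[str]:
    """Return the text of the first path that matches a non-empty element."""
    root = ET.fromstring(markup)
    for path in paths:
        node = root.find(path)
        if node is not None and node.text and node.text.strip():
            return node.text.strip()
    return None


# The same price page before and after a hypothetical redesign.
old_layout = "<html><body><span class='price'>$49</span></body></html>"
new_layout = "<html><body><div class='plan-price'> $59 </div></body></html>"

price_before = extract_price(old_layout)
price_after = extract_price(new_layout)
```

A `None` result is the useful signal here: it marks a page where every known path failed and the quality assurance layer should flag the record instead of delivering a blank value.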

Client Background: A Sydney-based proptech company providing automated valuation models (AVM) and market analytics to 45 mortgage lenders and real estate agencies across Australia and New Zealand.

Challenge: The client needed daily extraction of 600,000+ property listings, recent sales data, and rental market updates across Domain, REA Group, and 12 state-level government property registries. Their AVM model accuracy was degrading because data ingestion delays meant models were running on 48-72 hour stale inputs.

Solution: Hir Infotech implemented a distributed scraping architecture designed specifically for the Australian property data ecosystem — including government registry data formats specific to NSW, VIC, QLD, and WA. Crawls were scheduled to complete and deliver structured data by 6:00 AM AEST daily, ensuring AVM models refreshed before market opening. Output integrated directly with the client’s AWS data lake via a custom API connector.

Results: Data staleness dropped from 48-72 hours to under 6 hours. AVM model accuracy improved by 8.3 percentage points, a material improvement for mortgage lenders using the platform for loan approval decisions. The client’s enterprise sales team cited improved data accuracy as the primary factor in closing three new lender contracts within six months of deployment.

Client Testimonial: “Our lender clients have strict requirements on data freshness. Hir Infotech built a pipeline that meets those requirements reliably, every single day. The team’s understanding of the Australian property data landscape was impressive.” — CTO, PropTech Platform, Sydney.

Client Background: A Paris-based healthcare market research company providing pharmaceutical manufacturers with drug pricing, formulary coverage, and competitive pipeline intelligence across the EU-27 market. Regulated under French data protection law (CNIL) and subject to GDPR Article 9 provisions for health-related data.

Challenge: The client’s research analysts were manually compiling drug pricing data from 14 national health authority websites across France, Germany, Italy, Spain, Netherlands, Belgium, and Austria — a process requiring 6 dedicated analysts working full time and still producing data with 2-week latency.

Solution: Hir Infotech designed a multilingual web scraping pipeline covering 14 EU health authority portals in French, German, Italian, Spanish, and Dutch — with NLP-based field extraction calibrated for health regulatory document formats. All pipelines included GDPR Article 9 safeguards and CNIL-compliant data handling procedures. Outputs were delivered as structured Excel and API feeds compatible with the client’s existing research platform.

Results: Manual analyst effort for data compilation fell by 82%. Data latency dropped from 2 weeks to 48 hours. The research team could now cover 4x more pharmaceutical compounds per quarter with the same headcount. The client cited the multilingual NLP capability as a differentiator no other vendor had offered.

Client Testimonial: “For pharma intelligence across multiple EU markets, language has always been the barrier. Hir Infotech’s multilingual extraction capability gave us data quality in French, German, and Italian that we previously thought was only achievable through human translation.” — Director of Research, Healthcare Analytics, Paris.

Client Background: A Miami-based travel technology company providing revenue management software to 280 independent hotel groups across the USA, Spain, Italy, and Mexico. Their dynamic pricing engine required competitor rate data from 8 OTAs refreshed every 4 hours.

Challenge: Rate parity monitoring across 280 hotel clients, each tracking 15-25 competitor properties across Booking.com, Expedia, Hotels.com, and Airbnb, required 50,400+ data points every 4 hours — a volume that overwhelmed their existing infrastructure and resulted in 31% data gaps during peak traffic periods.

Solution: Hir Infotech deployed a high-concurrency scraping cluster specifically architected for OTA rate extraction — including Booking.com’s dynamic pricing modules, Expedia’s availability grids, and Airbnb’s multi-currency rate displays across 6 geographic markets. The pipeline handled 60,000+ data points per 4-hour cycle with redundancy and automatic retry on extraction failures.

Results: The data gap rate fell from 31% to under 0.8%. The client’s revenue management software customers reported average RevPAR improvements of 6-11% in the first quarter post-deployment. The solution supported the client’s expansion from 280 hotel clients to 410 within eight months, with no additional scraping infrastructure investment required.

Client Testimonial: “The volume and reliability Hir Infotech delivers is genuinely exceptional. We’ve grown our client base by 46% and our data infrastructure costs haven’t increased proportionally at all. That’s the kind of partnership that changes a business.” — CEO, Travel Technology Company, Miami.
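Automatic retry on extraction failures, as mentioned in this case study, is commonly implemented with exponential backoff. The following is a minimal sketch of that pattern, not the client's actual pipeline: the function names, the three-attempt default, and the simulated failure are assumptions.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry(fetch: Callable[[], T], attempts: int = 3, base_delay: float = 0.5,
          sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fetch until it succeeds, doubling the wait after each failure."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error = None
    for attempt in range(attempts):
        try:
            return fetch()
        except Exception as error:
            last_error = error
            if attempt < attempts - 1:
                sleep(base_delay * (2 ** attempt))
    raise last_error


calls = {"count": 0}


def flaky_fetch() -> str:
    """Simulated extraction that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("simulated extraction failure")
    return "rate data"


# Record the delays instead of sleeping so the example runs instantly.
delays = []
result = retry(flaky_fetch, attempts=3, base_delay=0.5, sleep=delays.append)
```

Passing `sleep` as a parameter keeps the retry logic testable without real waiting. In a live cluster the default `time.sleep` would apply.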

Client Background: An Amsterdam-based HR technology company providing workforce analytics and talent market intelligence to enterprise HR teams across the Netherlands, Germany, Belgium, and Denmark. Their platform tracked job posting trends, salary benchmarks, and skill demand signals.

Challenge: The client needed continuous extraction from 34 job boards across 4 countries in Dutch, German, French, and English — including platforms with aggressive anti-scraping protections (Stepstone DE, Jobbird NL, Vacature.com BE) — while maintaining GDPR compliance, as some job postings contained personal data elements.

Solution: Hir Infotech built a multilingual, GDPR-compliant job intelligence pipeline with purpose limitation safeguards — extracting structural job market data (titles, skills, compensation ranges, geographic distribution) while applying automated PII filtering to strip personal identifiers before data delivery. The pipeline covered 34 platforms across 4 countries with daily refresh and full audit trail documentation.

Results: Platform data coverage expanded from 18 to 34 job board sources. GDPR compliance documentation satisfied due diligence requirements from two enterprise clients in the financial services sector. The HR analytics platform launched a new salary benchmarking product feature directly enabled by the expanded data pipeline, generating €340,000 in new ARR within 12 months.

Client Testimonial: “Hir Infotech understood our compliance requirements immediately — they came to the first call with a GDPR framework for job data already prepared. That level of expertise in both data engineering and regulatory compliance is genuinely rare.” — Chief Product Officer, HR Technology, Amsterdam.
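The automated PII filtering described in this case study can be illustrated with a small regex-based pass over job posting text. This is a deliberately narrow sketch: the patterns, placeholder strings, and sample posting are assumptions, and the phone pattern only catches numbers written in international format with a leading plus sign so that salary ranges are left alone.

```python
import re

# Simple illustrative patterns; real PII detection needs far broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+\d[\d\s().-]{7,}\d")


def strip_pii(text: str) -> str:
    """Replace email addresses and international phone numbers with placeholders."""
    text = EMAIL_RE.sub("[email removed]", text)
    return PHONE_RE.sub("[phone removed]", text)


# Hypothetical posting with a recruiter's contact details embedded.
posting = (
    "Senior Data Engineer, Utrecht. Salary 60000 - 80000 EUR. "
    "Contact jan.devries@example.nl or +31 20 555 0123."
)
cleaned = strip_pii(posting)
```

The job title, location, and compensation range survive while the contact details are replaced. Names of individuals are not handled here and would need a different technique, such as named entity recognition.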

Client Background:

A mid-market B2B SaaS company headquartered in Austin, Texas, offering project management and workflow automation software. The company maintains a sales team of 45 representatives and manages an outbound pipeline targeting operations and IT leaders at companies with 200–2,000 employees.

Challenge:

The client’s CRM contained approximately 180,000 contact records accumulated over five years. Internal audits revealed that 38% of email addresses were bouncing, 24% of phone numbers were disconnected, and over 60% of records were missing firmographic fields like company revenue, employee count, and technology stack data. The SDR team was spending an average of 2.5 hours per day on manual data research, and campaign deliverability had declined significantly, triggering Google Workspace spam flags.

Solution:

Hir Infotech performed a full-scope data append project in three phases: (1) email address verification and re-appending using our AI match engine, (2) direct-dial phone number appending for all SDR-prioritised accounts, and (3) firmographic and technographic enrichment covering revenue bands, employee counts, SIC codes, CRM platform usage, and marketing automation stack for all 180,000 records.

Results:

Client Testimonial:

“Hir Infotech didn’t just clean our data — they fundamentally improved how our sales machine operates. The technographic append alone unlocked a targeting layer we didn’t know we were missing. Our SDRs are faster, our campaigns are cleaner, and the ROI showed up in the first 90 days.”

— VP of Revenue Operations, SaaS Platform, Austin TX

Client Background: A Frankfurt-based alternative data provider supplying structured datasets to 40+ asset management and hedge fund clients across the EU. Regulated under BaFin and required to document all data sourcing for client compliance audits.

Challenge: The client needed to scale their web data acquisition from 120 monitored sources to 800+ without proportionally scaling their internal engineering team. Critically, every dataset needed full provenance documentation — source URL, extraction timestamp, lawful basis assessment, and retention policy — to satisfy fund clients’ regulatory reporting requirements under MiFID II.

Solution: Hir Infotech built a white-label data acquisition platform with full provenance logging embedded in the pipeline architecture. Each dataset delivered included a machine-readable compliance manifest detailing source, timestamp, extraction method, and regulatory assessment. The system was EU-hosted with German data residency, satisfying BaFin and GDPR requirements. 847 sources were onboarded within 45 days.

Results: The client scaled from 120 to 847 monitored sources with zero increase in internal engineering headcount. Audit response time for fund client data requests dropped from 3 days to 4 hours. The compliance manifest system was specifically cited by two fund clients as a reason for expanding their data subscription agreements.

Client Testimonial: “The provenance documentation Hir Infotech built into the pipeline is unlike anything we’ve seen from other vendors. Our funds’ compliance teams now use it as a benchmark for what good data sourcing looks like.” — Chief Data Officer, Alternative Data Provider, Frankfurt.

Client Background: A London-based SaaS company providing competitive intelligence software to 300+ B2B technology companies across the UK and Northern Europe. Their platform required continuous ingestion of product update announcements, pricing pages, and feature release notes from 5,000+ software vendor websites.

Challenge: The client’s existing scraping infrastructure suffered 23% data loss monthly due to site structure changes, anti-bot blocks, and JavaScript-rendered content that legacy scrapers couldn’t parse. Customer churn was rising as product intelligence gaps became visible to end users.

Solution: Hir Infotech migrated the client’s data acquisition layer to a fully managed AI scraping infrastructure with adaptive CSS selector recovery (automatically detects and corrects for site structure changes), headless browser rendering for JavaScript-heavy SaaS pricing pages, and a dedicated quality assurance layer that flagged anomalous data before it reached the client’s platform.

Results: Data loss rate dropped from 23% to under 1.2% within 30 days of migration. Coverage expanded from 5,000 to 7,400 software vendors without additional cost. Customer support tickets related to missing or stale intelligence dropped by 78%. The client reported a 19% reduction in churn rate in the following two quarters, directly attributable to improved data completeness.

Client Testimonial: “We’d tried three other scraping vendors before Hir Infotech. The difference in data reliability is extraordinary. Our customers finally trust the intelligence we provide, and our NPS has improved significantly since the migration.” — Head of Product, SaaS Market Intelligence Platform, London.

Client Background: A Sydney-based proptech company providing automated valuation models (AVM) and market analytics to 45 mortgage lenders and real estate agencies across Australia and New Zealand.

Challenge: The client needed daily extraction of 600,000+ property listings, recent sales data, and rental market updates across Domain, REA Group, and 12 state-level government property registries. Their AVM model accuracy was degrading because data ingestion delays meant models were running on 48-72 hour stale inputs.

Solution: Hir Infotech implemented a distributed scraping architecture designed specifically for the Australian property data ecosystem — including government registry data formats specific to NSW, VIC, QLD, and WA. Crawls were scheduled to complete and deliver structured data by 6:00 AM AEST daily, ensuring AVM models refreshed before market opening. Output integrated directly with the client’s AWS data lake via a custom API connector.

Results: Data staleness dropped from 48-72 hours to under 6 hours. AVM model accuracy improved by 8.3 percentage points, a material improvement for mortgage lenders using the platform for loan approval decisions. The client’s enterprise sales team cited improved data accuracy as the primary factor in closing three new lender contracts within six months of deployment.

Client Testimonial: “Our lender clients have strict requirements on data freshness. Hir Infotech built a pipeline that meets those requirements reliably, every single day. The team’s understanding of the Australian property data landscape was impressive.” — CTO, PropTech Platform, Sydney.

Client Background: A Paris-based healthcare market research company providing pharmaceutical manufacturers with drug pricing, formulary coverage, and competitive pipeline intelligence across the EU-27 market. Regulated under French data protection law (CNIL) and subject to GDPR Article 9 provisions for health-related data.

Challenge: The client’s research analysts were manually compiling drug pricing data from 14 national health authority websites across France, Germany, Italy, Spain, Netherlands, Belgium, and Austria — a process requiring 6 dedicated analysts working full time and still producing data with 2-week latency.

Solution: Hir Infotech designed a multilingual web scraping pipeline covering 14 EU health authority portals in French, German, Italian, Spanish, and Dutch — with NLP-based field extraction calibrated for health regulatory document formats. All pipelines included GDPR Article 9 safeguards and CNIL-compliant data handling procedures. Outputs were delivered as structured Excel and API feeds compatible with the client’s existing research platform.

Results: Manual analyst effort for data compilation reduced by 82%. Data latency dropped from 2 weeks to 48 hours. The research team could now cover 4x more pharmaceutical compounds per quarter with the same headcount. Client cited the multilingual NLP capability as a differentiator no other vendor had offered.

Client Testimonial: “For pharma intelligence across multiple EU markets, language has always been the barrier. Hir Infotech’s multilingual extraction capability gave us data quality in French, German, and Italian that we previously thought was only achievable through human translation.” — Director of Research, Healthcare Analytics, Paris.

Client Background: A Miami-based travel technology company providing revenue management software to 280 independent hotel groups across the USA, Spain, Italy, and Mexico. Their dynamic pricing engine required competitor rate data from 8 OTAs refreshed every 4 hours.

Challenge: Rate parity monitoring across 280 hotel clients, each tracking 15-25 competitor properties across Booking.com, Expedia, Hotels.com, and Airbnb, required 50,400+ data points every 4 hours — a volume that overwhelmed their existing infrastructure and resulted in 31% data gaps during peak traffic periods.

Solution: Hir Infotech deployed a high-concurrency scraping cluster specifically architected for OTA rate extraction — including Booking.com’s dynamic pricing modules, Expedia’s availability grids, and Airbnb’s multi-currency rate displays across 6 geographic markets. The pipeline handled 60,000+ data points per 4-hour cycle with redundancy and automatic retry on extraction failures.

Results: Data gap rate reduced from 31% to under 0.8%. The client’s revenue management software customers reported average RevPAR improvements of 6-11% in the first quarter post-deployment. The solution supported the client’s expansion from 280 hotel clients to 410 within eight months, with no additional scraping infrastructure investment required.

Client Testimonial: “The volume and reliability Hir Infotech delivers is genuinely exceptional. We’ve grown our client base by 46% and our data infrastructure costs haven’t increased proportionally at all. That’s the kind of partnership that changes a business.” — CEO, Travel Technology Company, Miami.

Client Background: An Amsterdam-based HR technology company providing workforce analytics and talent market intelligence to enterprise HR teams across the Netherlands, Germany, Belgium, and Denmark. Their platform tracked job posting trends, salary benchmarks, and skill demand signals.

Challenge: The client needed continuous extraction from 34 job boards across 4 countries in Dutch, German, French, and English — including platforms with aggressive anti-scraping protections (Stepstone DE, Jobbird NL, Vacature.com BE) — while maintaining GDPR compliance, as some job postings contained personal data elements.

Solution: Hir Infotech built a multilingual, GDPR-compliant job intelligence pipeline with purpose limitation safeguards — extracting structural job market data (titles, skills, compensation ranges, geographic distribution) while applying automated PII filtering to strip personal identifiers before data delivery. The pipeline covered 34 platforms across 4 countries with daily refresh and full audit trail documentation.

Results: Platform data coverage expanded from 18 to 34 job board sources. GDPR compliance documentation satisfied due diligence requirements from two enterprise clients in the financial services sector. The HR analytics platform launched a new salary benchmarking product feature directly enabled by the expanded data pipeline, generating €340,000 in new ARR within 12 months.

Client Testimonial: “Hir Infotech understood our compliance requirements immediately — they came to the first call with a GDPR framework for job data already prepared. That level of expertise in both data engineering and regulatory compliance is genuinely rare.” — Chief Product Officer, HR Technology, Amsterdam.
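As a rough illustration of the automated PII filtering mentioned in this case study, the sketch below keeps only allow-listed fields (data minimization) and redacts email addresses and phone numbers from free text. The regular expressions, field names, and function names are assumptions for illustration, not the production filter; a real deployment would need broader patterns and human review.

```python
import re

# Deliberately simple patterns, assumed for illustration. The phone pattern
# will also match other long digit runs (e.g. reference numbers).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def filter_posting(posting: dict, allowed_fields: set) -> dict:
    """Drop fields outside the allow-list and redact PII in string values."""
    return {
        key: redact_pii(value) if isinstance(value, str) else value
        for key, value in posting.items()
        if key in allowed_fields
    }


posting = {
    "title": "Data Engineer",
    "description": "Apply via jan@example.com or +31 20 123 4567.",
    "recruiter_name": "Jan Jansen",  # personal data: not on the allow-list
    "salary_min": 60000,
}
clean = filter_posting(posting, {"title", "description", "salary_min"})
```

The allow-list approach means a newly appearing personal field is excluded by default rather than delivered by accident.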

Rely on Hir Infotech for 95%+ accurate data, meticulously verified to fuel your B2B success. Our global scraping solutions deliver trusted insights for confident decision-making worldwide.

With 13+ years of expertise, Hir Infotech has served 2,745+ clients globally. Our proven scraping solutions drive B2B success across the USA, Europe, and Australia.

Your competitors are already acting on data you haven’t collected yet. Don’t let intelligence gaps cost you market share, margin, or growth.

Hir Infotech — trusted by 2,745+ B2B clients across the USA, Europe, and Australia for 13+ years — is ready to build your first AI-powered web scraping pipeline and deliver a free sample dataset from your exact target sources within days.

Whether you need real-time competitive pricing, enriched lead data, market intelligence feeds, or regulatory monitoring — our team of senior data engineers, AI specialists, and compliance experts will design a solution tailored to your industry, geography, and data stack.

No commitment required. Production-quality data from your target sources, delivered in your preferred format — so you can validate quality before any commercial conversation.

Real-time data extraction delivers competitor pricing, product updates, and market movements within hours — enabling sales, marketing, and strategy teams to act on live market signals rather than stale weekly reports.

Replacing human analyst data gathering with automated pipelines reduces operational cost per data point by up to 90% — freeing teams to focus on insight generation, strategy, and decision-making rather than data collection.

Fully managed onboarding, pre-built compliance frameworks, and production-ready pipeline templates mean most enterprise clients receive their first structured data delivery within 5-7 business days — dramatically faster than internal build timelines.

AI-driven infrastructure scales from 10,000 to 100 million+ data points per day without architectural changes — ideal for enterprise clients with growing data appetites across multiple markets, products, and geographies.

Structured data delivery via API, JSON, CSV, XML, and direct connectors for Salesforce, HubSpot, Snowflake, BigQuery, Power BI, and custom enterprise data warehouses ensures no-friction adoption into existing workflows.

NLP-enriched parsing, automated QA validation layers, and structured deduplication ensure that data delivered by Hir Infotech is consistently accurate, deduplicated, and ready for direct analytical use without manual preprocessing.

Hir Infotech’s engineering team proactively monitors crawl health, detects and adapts to site structure changes, resolves anti-bot challenges, and maintains data continuity — with no engineering involvement required from the client side.

Every pipeline is built with compliance-first architecture: lawful basis documentation, PII filtering, data minimization protocols, and audit trail generation — satisfying the requirements of legal teams, DPOs, and enterprise procurement processes.

Native language NLP extraction in English, German, French, Spanish, Italian, Dutch, and Swedish enables B2B companies in multilingual EU markets to access structured intelligence from domestic-language sources accurately and at scale.

13+ years of expertise, 2,745+ satisfied clients globally, and production deployments in 25+ industries across the USA, Europe, and Australia provide enterprise procurement teams with the verifiable track record required for mission-critical data partnerships.

At Hir Infotech, we offer flexible pricing models to power your data-driven success. Choose Subscription-Based Pricing for ongoing scraping needs with predictable costs, Pay-As-You-Go for one-off tasks billed by usage, Project-Based Flat Fees for tailored, end-to-end solutions, or Hourly Pricing for custom development and complex challenges. Whatever your budget or project scope, our expert team delivers cost-effective, high-quality web scraping solutions designed to fit your needs.

Project-Based Flat Fee: A one-time fee is charged for a specific project, regardless of volume or duration, based on scope and complexity.

Hourly Pricing: Billed based on the time spent developing, running, or maintaining the scraper; often used for custom or consulting-heavy projects.

Pay-As-You-Go: Charged based on actual usage, such as per request, per GB of bandwidth, or per page scraped, with no fixed commitment.

Subscription-Based Pricing: A recurring fee (monthly or annually) is charged for access to scraping services, often tiered based on usage limits like the number of requests, pages scraped, or data points extracted.

We begin by collaborating with you to define your data needs—be it for a one-time project, recurring insights, or custom solutions. Whether you opt for Pay-As-You-Go flexibility, a Project-Based Flat Fee, Hourly expertise, or a Subscription plan, we align our approach to your objectives.

Our team identifies the websites and data sources critical to your project. We analyze site structures, assess complexity (e.g., static vs. dynamic content), and plan the most efficient scraping strategy, ensuring compliance with public data access norms.

Using cutting-edge tools and custom-built scrapers, we extract data at scale. We tackle challenges like JavaScript-rendered pages or anti-scraping measures with techniques such as headless browser rendering, rotating IP pools, and adaptive rate-limiting.

Raw data is parsed, cleaned, and structured into formats like CSV, JSON, or Excel. We remove duplicates, correct errors, and validate accuracy to ensure you receive reliable, ready-to-use datasets.
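The cleaning step can be sketched roughly as follows: normalize whitespace, drop rows missing a key field, remove duplicates, and serialize to CSV. The field names (`name`, `url`, `price`) and the choice of `(name, url)` as the deduplication key are assumptions for illustration; real pipelines choose keys per dataset.

```python
import csv
import io


def clean_records(records):
    """Normalize whitespace, drop rows without a name, dedupe on (name, url)."""
    seen = set()
    cleaned = []
    for rec in records:
        # Collapse runs of whitespace and trim both ends of every value.
        row = {key: " ".join(str(value).split()) for key, value in rec.items()}
        dedupe_key = (row.get("name", "").lower(), row.get("url", ""))
        if not dedupe_key[0] or dedupe_key in seen:
            continue  # skip incomplete rows and repeats
        seen.add(dedupe_key)
        cleaned.append(row)
    return cleaned


def to_csv(rows, fieldnames):
    """Serialize rows (dicts with exactly `fieldnames` as keys) to CSV text."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


raw = [
    {"name": "  Acme  Widget ", "url": "https://example.com/a", "price": "19.99"},
    {"name": "acme widget", "url": "https://example.com/a", "price": "19.99"},
    {"name": "", "url": "https://example.com/b", "price": "5"},
]
rows = clean_records(raw)
csv_text = to_csv(rows, ["name", "url", "price"])
```

The same `rows` list could be passed to `json.dumps` for JSON delivery; only the serialization step changes.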

Depending on your pricing model, we deliver results how and when you need them, whether via API, JSON, CSV, XML, or direct connectors into your existing data stack.

We monitor site changes, adapt scrapers as needed, and provide support to keep your data flowing seamlessly. Subscription clients enjoy continuous updates, while Hourly clients benefit from hands-on refinements.

AI-driven web scraping uses machine learning models, natural language processing, and computer vision to extract, structure, and interpret data from websites intelligently — adapting to site changes, understanding semantic context, and handling dynamic JavaScript-rendered content that traditional rule-based scrapers cannot process. Unlike traditional scrapers that break when website structures change, AI-native scrapers self-correct, classify data automatically, and deliver NLP-enriched, analysis-ready outputs. For B2B enterprises, this means dramatically higher data reliability, less maintenance overhead, and significantly better data quality straight from the extraction pipeline.

Scraping publicly available data is generally considered lawful in most jurisdictions when conducted responsibly. However, legality depends on what data is collected, how it’s used, and which regulatory frameworks apply. Under GDPR (Europe), any extraction involving personal data requires a documented lawful basis, purpose limitation, and data minimization compliance. Under CCPA (California/USA), businesses must ensure downstream use does not violate consumer privacy rights. Hir Infotech conducts a domain risk assessment on every project — evaluating robots.txt, platform Terms of Service, and applicable data protection regulations — and provides full compliance documentation. This pre-project legal framework is standard practice on every engagement.
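One input to the domain risk assessment described above, the robots.txt evaluation, can be checked programmatically. The sketch below uses Python's standard `urllib.robotparser`; the wrapper name `allowed_by_robots`, the user agent string, and the sample rules are assumptions for illustration. It covers robots.txt only and says nothing about Terms of Service or data protection law.

```python
from urllib.robotparser import RobotFileParser


def allowed_by_robots(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits `user_agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse rules from text, no network call
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt content for a hypothetical site.
sample_rules = "User-agent: *\nDisallow: /private/\n"

public_ok = allowed_by_robots(sample_rules, "example-bot", "https://example.com/jobs/123")
private_ok = allowed_by_robots(sample_rules, "example-bot", "https://example.com/private/x")
```

Parsing from a string keeps the check testable offline; in practice the file would first be downloaded from the target domain.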

Hir Infotech delivers structured web data in all major enterprise formats: JSON, CSV, XML, Excel, and via REST API with custom schema design. Our delivery infrastructure integrates natively with Salesforce, HubSpot, Snowflake, Google BigQuery, Microsoft Azure Data Factory, Power BI, Looker, and custom data warehousing or BI environments. For clients requiring EU data residency (Germany, France, Netherlands, Austria), we offer EU-hosted processing with full data sovereignty documentation.

For standard use cases (e-commerce price monitoring, lead generation, job market intelligence, news aggregation), Hir Infotech can deliver a first production pipeline within 5-7 business days. Complex enterprise deployments involving multilingual extraction, high-volume concurrent crawling, or custom compliance architecture typically complete onboarding within 14-21 days. We offer a free sample dataset from your target sources before full commitment, so you can validate data quality and format fit before signing off on a production engagement.

Our AI scraping infrastructure includes enterprise-grade anti-detection technology: rotating residential and datacenter IP pools, CAPTCHA resolution, headless browser rendering, human-behavior simulation, dynamic fingerprint management, and adaptive rate-limiting. These systems are continuously updated as platform anti-bot technologies evolve. For particularly high-protection platforms, our team conducts a technical feasibility assessment before project commencement and designs specific extraction strategies that maintain compliance with platform terms while achieving the required data coverage.
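Adaptive rate-limiting, one of the items listed above, can be sketched as a per-host delay that grows when the server signals throttling and relaxes slowly after successful responses. The class name, the default values, and the choice of HTTP 429 and 503 as throttling signals are assumptions for illustration, not a description of the production system.

```python
class AdaptiveDelay:
    """Per-host request delay that backs off on throttling and recovers on success."""

    def __init__(self, initial: float = 1.0, minimum: float = 0.5, maximum: float = 60.0):
        self.delay = initial
        self.minimum = minimum
        self.maximum = maximum

    def record(self, status_code: int) -> float:
        """Update the delay from the latest response status and return it (seconds)."""
        if status_code in (429, 503):
            # Server asked us to slow down: double the delay, up to the cap.
            self.delay = min(self.delay * 2, self.maximum)
        elif 200 <= status_code < 300:
            # Success: ease the delay down by 10%, never below the floor.
            self.delay = max(self.delay * 0.9, self.minimum)
        return self.delay
```

A crawler would call `record()` after each response and sleep for the returned number of seconds before the next request to the same host. Doubling on failure and shrinking by only 10% on success makes the crawler quick to back off and slow to speed up again.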

Hir Infotech serves 25+ industries with production-grade AI web scraping deployments, including: e-commerce and retail, financial services and fintech, healthcare and pharmaceuticals, real estate and proptech, travel and hospitality, human resources and recruitment, SaaS and technology, media and publishing, manufacturing and supply chain, legal and regulatory intelligence, and government and public sector procurement. Our geographic coverage spans all major markets in the USA, UK, Germany, France, Italy, Spain, Netherlands, Austria, Sweden, Denmark, Switzerland, Iceland, and Australia.

Yes. A substantial proportion of enterprise-relevant websites — including SaaS pricing pages, financial platforms, e-commerce storefronts, and real estate portals — are built on JavaScript frameworks (React, Angular, Vue.js) that render content client-side, making them inaccessible to traditional scrapers. Hir Infotech’s headless browser infrastructure (built on Chromium-based rendering engines) fully executes JavaScript, waits for dynamic content to load, interacts with paginated and filter-based interfaces, and extracts data from the fully rendered DOM — achieving the same data coverage from a JavaScript-heavy site as from a static HTML page.

Our data quality architecture is multi-layered: (1) Intelligent extraction using NLP-trained field parsers reduces raw extraction errors at source. (2) Automated post-extraction QA validates field completeness, data type consistency, and value ranges against expected parameters. (3) Anomaly detection flags datasets where values deviate materially from historical baselines, triggering human review before delivery. (4) Deduplication algorithms identify and remove duplicate records introduced by multiple source appearances of the same entity. The result is a consistently 99.4%+ accuracy rate across client deliveries, validated through quarterly data quality audits on all active pipelines.
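Layers (2) and (3) above can be illustrated with a small sketch: a per-record check for completeness, type, and value range, plus a simple z-score test against historical values. The function names, the problem-label format, and the threshold of 3 standard deviations are assumptions for illustration; the source does not specify how the production checks are implemented.

```python
from statistics import mean, stdev


def validate_record(rec: dict, required: list, ranges: dict) -> list:
    """Return a list of problems: missing fields, non-numeric or out-of-range values."""
    problems = [f"missing:{field}" for field in required if rec.get(field) in (None, "")]
    for field, (low, high) in ranges.items():
        value = rec.get(field)
        if value in (None, ""):
            continue  # already reported as missing if the field is required
        try:
            number = float(value)
        except (TypeError, ValueError):
            problems.append(f"not_numeric:{field}")
            continue
        if not low <= number <= high:
            problems.append(f"out_of_range:{field}")
    return problems


def is_anomalous(value: float, history: list, z_threshold: float = 3.0) -> bool:
    """Flag a value far from the historical mean, measured in standard deviations."""
    if len(history) < 2:
        return False  # not enough history to judge
    sigma = stdev(history)
    if sigma == 0:
        return value != history[0]
    return abs(value - mean(history)) / sigma > z_threshold
```

For example, with a nightly rate history of `[100, 102, 98, 101, 99]`, a new value of 500 would be flagged for human review, while 101 would pass.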

Hir Infotech operates on project-based and retainer pricing models tailored to enterprise scale. Pricing is structured around data volume (records per month), number of monitored sources, refresh frequency (hourly, daily, weekly), delivery format complexity, and compliance documentation requirements. We offer flexible month-to-month retainer agreements for ongoing intelligence feeds and fixed-project pricing for one-time data acquisition exercises. All engagements begin with a free sample data delivery from your target sources, enabling data quality and format validation before commercial commitment.

Integration is a first-class feature of Hir Infotech’s delivery architecture. Our structured data outputs are schema-designed to match your target system’s data model before delivery — whether that’s Salesforce lead objects, HubSpot contact properties, Snowflake table schemas, or BigQuery dataset structures. For clients using business intelligence platforms (Power BI, Tableau, Looker, Metabase), we provide pre-formatted datasets with consistent field naming and type conventions. Our technical team provides integration documentation and, for enterprise clients, dedicated onboarding support to ensure smooth pipeline connectivity with minimal internal engineering effort.

+91 99099 90610

+91 94096 28528

inquiry@hirinfotech.com
