Hir Infotech delivers enterprise-grade ETL (Extract, Transform, Load) services that unify fragmented data sources into clean, structured, analytics-ready pipelines at the speed and scale modern business demands. With 13+ years of hands-on experience and 2,745+ satisfied clients across the USA, Europe, and Australia, we engineer ETL solutions that eliminate data silos, automate complex transformations, and fuel confident decision-making for mid-market and enterprise organizations. Whether you’re integrating legacy systems, cloud warehouses, SaaS platforms, or real-time data streams, Hir Infotech is the trusted ETL partner that turns data complexity into competitive clarity.

3,200+ Projects Delivered | 99.7% Data Accuracy | $7.63B Market Growth | 2,745+ Client Base | 13+ Years of Expertise

In an era where global data creation is approaching 175 zettabytes, businesses that cannot efficiently extract, transform, and load their data are operating blind. ETL (Extract, Transform, Load) is the foundational process that moves raw data from disparate sources — CRMs, ERPs, APIs, databases, SaaS platforms, and web streams — into structured, analytics-ready formats your teams can actually trust and use. For B2B organizations across the USA, UK, Germany, France, Netherlands, Sweden, Switzerland, Australia, and beyond, the ability to make fast, data-backed decisions is no longer optional. It is a survival requirement. At Hir Infotech, we design, build, and manage custom ETL pipelines that integrate with your existing technology stack seamlessly. With 13+ years of experience and 2,745+ enterprise clients globally, our team delivers pipelines that are not just functional — they are built for scale, compliance, and speed. Our AI-augmented ETL processes reduce manual intervention, surface anomalies before they become business risks, and keep your data warehouse perpetually fresh and trustworthy.

Hir Infotech delivers complete ETL lifecycle management — from source profiling and pipeline architecture to deployment, monitoring, and self-healing maintenance — ensuring your data infrastructure runs without disruption.

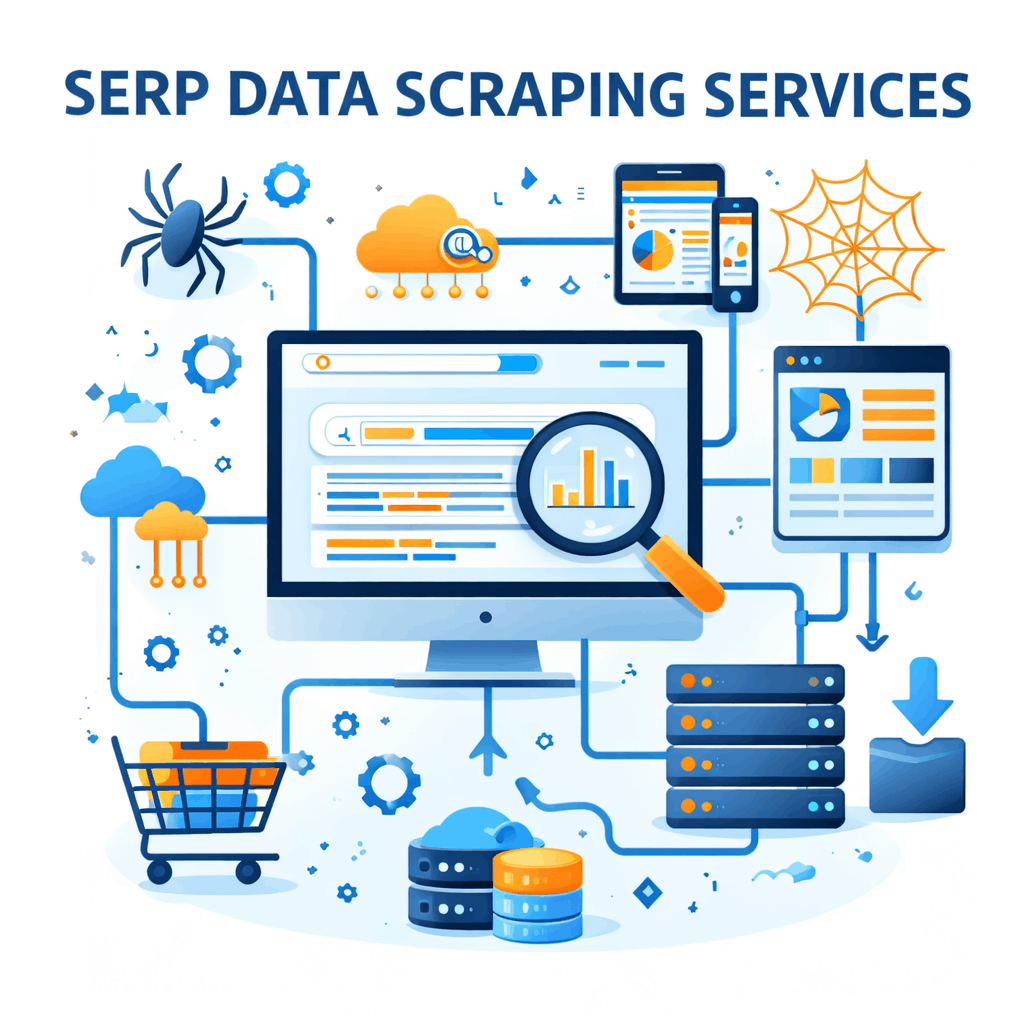

Our platform uses machine learning to automatically detect and map schema relationships across source systems, reducing integration time by up to 70% and adapting instantly when upstream data structures change — no manual reconfiguration required.
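The matching step behind automated schema mapping can be illustrated with a much simpler stand-in than machine learning. The sketch below assumes plain string similarity from Python's standard-library `difflib` is good enough for a first pass: it proposes a target column for each source column and leaves low-confidence matches unmapped for human review. The function name `suggest_mapping` and the 0.8 threshold are hypothetical choices, not part of Hir Infotech's platform.

```python
from difflib import SequenceMatcher


def _normalize(name: str) -> str:
    """Lowercase and drop separators so 'Contact_Email' and 'contactEmail' compare equal."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def suggest_mapping(source_cols, target_cols, threshold=0.8):
    """Map each source column to its most similar target column, or None if no match is close enough."""
    mapping = {}
    for src in source_cols:
        best, best_score = None, 0.0
        for tgt in target_cols:
            score = SequenceMatcher(None, _normalize(src), _normalize(tgt)).ratio()
            if score > best_score:
                best, best_score = tgt, score
        # Below the threshold, leave the column unmapped rather than guess.
        mapping[src] = best if best_score >= threshold else None
    return mapping


mapping = suggest_mapping(["Contact_Email", "AcctName", "zzz"], ["contact_email", "account_name"])
print(mapping)
```

A production mapper would also compare data types and value distributions, not only column names.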

Hir Infotech’s ETL pipelines include autonomous anomaly detection and self-healing routines powered by AI. When a pipeline detects upstream schema drift, data quality failure, or throughput degradation, it auto-corrects or alerts your team — minimizing downtime and data debt.
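Schema drift detection usually starts by comparing the schema a pipeline expects with what actually arrives. The following is a minimal sketch that assumes the expected schema is a hypothetical `{field: Python type}` dictionary. It also assumes a simple policy: additive changes (new fields) are absorbed automatically, while missing or retyped fields trigger an alert.

```python
from dataclasses import dataclass, field


@dataclass
class SchemaDiff:
    added: set = field(default_factory=set)           # fields the source started sending
    missing: set = field(default_factory=set)         # expected fields that disappeared
    type_changed: dict = field(default_factory=dict)  # field -> (expected type, actual type)

    @property
    def has_drift(self) -> bool:
        return bool(self.added or self.missing or self.type_changed)


def detect_drift(expected: dict, record: dict) -> SchemaDiff:
    """Compare one incoming record against an expected {field: type} schema."""
    diff = SchemaDiff()
    diff.added = set(record) - set(expected)
    diff.missing = set(expected) - set(record)
    for name in set(expected) & set(record):
        value = record[name]
        # None is treated as a missing value, not as a type change.
        if value is not None and not isinstance(value, expected[name]):
            diff.type_changed[name] = (expected[name].__name__, type(value).__name__)
    return diff


def triage(diff: SchemaDiff) -> str:
    """Hypothetical policy: absorb additive drift, escalate everything else."""
    if not diff.has_drift:
        return "load"
    if diff.missing or diff.type_changed:
        return "alert"
    return "extend_schema"


expected = {"id": int, "amount": float, "currency": str}
incoming = {"id": 42, "amount": "19.99", "currency": "EUR", "region": "DE"}
diff = detect_drift(expected, incoming)
print(diff.added, diff.type_changed, triage(diff))
```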

We architect low-latency streaming pipelines using Apache Kafka, Spark Streaming, and AWS Kinesis that deliver sub-second data ingestion for use cases including fraud detection, live dashboards, IoT sensor feeds, and e-commerce inventory management.

Beyond loading to warehouses, we engineer Reverse ETL solutions that push enriched, analytics-ready data back into operational tools — Salesforce, HubSpot, Marketo, SAP, and custom CRMs — activating insights directly within your revenue and operations platforms.

Extract and transform customer interaction data from Salesforce, HubSpot, and Zoho CRM into a unified data warehouse. Enable sales teams across the USA and Europe to surface real-time pipeline analytics, forecast revenue accurately, and eliminate duplicated contact records with automated deduplication logic.
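Automated deduplication of CRM contacts often begins with a normalized match key. The sketch below makes a simplifying assumption: the email address alone serves as the key, whereas real CRM deduplication typically combines several fields. Duplicates are merged so that a later record can fill fields the first one left empty. `dedupe_contacts` is a hypothetical helper.

```python
def normalize_email(email: str) -> str:
    """Build a match key: trim whitespace and lowercase the address."""
    return email.strip().lower()


def dedupe_contacts(records):
    """Keep one contact per normalized email, filling empty fields from duplicates."""
    merged = {}
    for rec in records:
        key = normalize_email(rec["email"])
        if key not in merged:
            merged[key] = dict(rec, email=key)  # first record seen becomes the survivor
        else:
            survivor = merged[key]
            for field_name, value in rec.items():
                # Only fill gaps; never overwrite a value the survivor already has.
                if value and not survivor.get(field_name):
                    survivor[field_name] = value
    return list(merged.values())


records = [
    {"email": "Ana@Example.com", "name": "Ana", "phone": ""},           # e.g. from Salesforce
    {"email": " ana@example.com", "name": "", "phone": "+1 555 0100"},  # e.g. from HubSpot
    {"email": "bo@example.com", "name": "Bo", "phone": ""},
]
out = dedupe_contacts(records)
print(out)
```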

Consolidate order, inventory, returns, and customer behavior data from Shopify, Magento, WooCommerce, and Amazon Marketplace into a single analytics layer. Retail and e-commerce brands in the UK, Germany, and Australia use our ETL pipelines to generate real-time revenue dashboards and optimize marketing spend.

Extract transactional data from banking cores, payment gateways, and trading systems; transform it into regulatory-compliant formats for MiFID II (EU), SOX (USA), and PSD2 reporting. Our ETL pipelines for FinTech clients in Switzerland, the Netherlands, and the USA deliver audit-ready data trails at scale.

Merge patient records from Epic, Cerner, and legacy EMR systems into a unified analytics platform. Hir Infotech’s healthcare ETL pipelines in the USA, UK, and Germany support HIPAA and GDPR compliance while enabling population health analytics, clinical trial data management, and hospital performance reporting.

Extract and normalize logistics, procurement, and inventory data from SAP S/4HANA, Oracle ERP, and NetSuite into centralized data lakes. Manufacturing and logistics companies in Germany, France, and Australia use these pipelines to reduce inventory carrying costs and improve demand forecasting accuracy.

Aggregate campaign data from Google Ads, Meta Ads, LinkedIn, Marketo, and web analytics platforms into a single customer data model. B2B marketing teams in the USA, Sweden, and Denmark leverage these pipelines for multi-touch attribution, LTV modeling, and real-time campaign performance monitoring.

Extract property listing, pricing, and market trend data from MLS platforms, Rightmove (UK), Domain (Australia), and Immobilienscout24 (Germany) into a structured property intelligence warehouse. PropTech and real estate investment firms use this to power automated valuation models and market movement alerts.

Consolidate employee, payroll, performance, and recruitment data from HR platforms into a unified analytics environment. Enterprise HR teams across the USA, UK, and the Netherlands use Hir Infotech’s ETL pipelines to track workforce KPIs, reduce attrition risk, and align headcount with business growth.

Ingest and process high-velocity sensor data from IoT-enabled industrial equipment, smart buildings, and energy grids into real-time operational dashboards. Manufacturers in Austria, Sweden, and Iceland use our streaming ETL architecture to enable predictive maintenance and reduce equipment downtime by 30–45%.

Traditional, hand-coded ETL pipelines are brittle. They break when schemas change, require constant maintenance from scarce data engineering talent, and often deliver stale data that undermines the decisions they were built to support. In 2026, enterprises across the USA, UK, Germany, and France are shifting decisively toward AI-augmented ETL that adapts, self-heals, and scales without exponential resource investment. According to industry benchmarks, AI-driven ETL automation can reduce manual coding effort by 60–80% and cut pipeline maintenance costs significantly — a critical advantage in competitive markets where speed-to-insight drives revenue.

Hir Infotech’s AI-powered ETL services leverage machine learning for automated schema detection, NLP-based data classification, and intelligent deduplication — capabilities that traditional script-based ETL providers simply cannot match. Our pipelines process structured, semi-structured, and unstructured data from APIs, databases, flat files, cloud storage, and real-time streams. Across 3,200+ projects delivered to 2,745+ clients in the USA, Europe, and Australia, we have consistently achieved 99.7% data accuracy — giving enterprise decision-makers the confidence to act on their data without second-guessing its integrity.

Compliant by Design, Not as an Afterthought

For B2B companies operating across Europe and the USA, data compliance is as important as data quality. GDPR enforcement in the EU remains aggressive, with cumulative fines exceeding €5.88 billion since enforcement began. California's CCPA obligations also expanded again in January 2026, when new regulations took effect. Hir Infotech builds compliance into every layer of our ETL architecture: data minimization policies, AES-256 encryption in transit and at rest, role-based access controls, full audit trail logging, and data subject rights mechanisms are standard in every pipeline we deliver. Our European clients in Germany, France, the Netherlands, Spain, Italy, Switzerland, Austria, Denmark, Sweden, and Iceland benefit from ETL infrastructure that keeps personal data processing within GDPR-defined boundaries while still enabling powerful analytics.

We align ETL pipeline governance with GDPR Articles 5 and 25 (data minimization and privacy by design), CCPA’s opt-out and deletion rights requirements, and sector-specific standards including HIPAA (healthcare, USA), PSD2 (financial services, EU), and SOX (publicly listed companies, USA). Our compliance-first ETL framework means your data engineering team spends less time on regulatory documentation and more time generating business value — while your legal and risk teams maintain full auditability of every data movement across the pipeline.

Client Background:

A mid-market B2B SaaS company based in Austin, Texas, with a 250-person sales team operating across North America and Western Europe. The company used Salesforce as its CRM, HubSpot for marketing automation, and Google BigQuery as its analytics warehouse.

Challenge:

Sales leadership was making pipeline forecasts from data that was 24–48 hours old due to nightly batch ETL jobs that frequently failed on schema changes. The marketing and sales data lived in separate silos, preventing cohesive pipeline-to-revenue reporting. Manual fixes by data engineers consumed 35% of their weekly sprint capacity.

Solution:

Hir Infotech redesigned the client’s ETL architecture from batch to real-time streaming using Apache Kafka and our AI-powered schema adaptation layer. We built unified pipelines that extracted live data from Salesforce, HubSpot, and Stripe, applied business-logic transformations (deal stage normalization, ARR calculations, multi-touch attribution), and loaded clean records into BigQuery with sub-5-minute latency. Our self-healing pipeline monitors flagged and resolved 98% of schema drift events without human intervention.
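The case study does not say which multi-touch attribution model was used. For illustration only, a linear model (one common choice) splits each closed deal's ARR equally across every touchpoint recorded on it. The record shape below (`arr`, `touches`) is assumed.

```python
from collections import defaultdict


def linear_attribution(deals):
    """Split each deal's ARR equally across the channels that touched it."""
    credit = defaultdict(float)
    for deal in deals:
        touches = deal["touches"]
        if not touches:
            # Deals with no recorded touchpoints are kept visible, not dropped.
            credit["unattributed"] += deal["arr"]
            continue
        share = deal["arr"] / len(touches)
        for channel in touches:
            credit[channel] += share  # repeated touches on one channel earn repeated shares
    return dict(credit)


deals = [
    {"arr": 12000.0, "touches": ["webinar", "email", "paid_search"]},
    {"arr": 6000.0, "touches": ["email", "email"]},
    {"arr": 3000.0, "touches": []},
]
credit = linear_attribution(deals)
print(credit)
```

Position-based or time-decay models change only how `share` is computed per touch.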

Results:

Client Testimonial:

“Hir Infotech didn’t just fix our ETL — they transformed how our entire revenue organization trusts data. The real-time pipeline was live in three weeks and hasn’t broken since. That’s not something we experienced with any previous vendor.”

— VP of Revenue Operations, B2B SaaS Company, Austin, TX

Client Background:

A FinTech startup headquartered in Berlin, Germany, offering embedded lending products to SMEs across Germany, Austria, and the Netherlands. The company processed loan application, credit scoring, and transaction data from three separate core banking APIs.

Challenge:

The company’s patchwork of manual Python scripts failed to enforce data minimization or maintain the audit logs required by GDPR and MiFID II. Data from three API sources used inconsistent field naming, currency formats, and date standards — making cross-country reporting nearly impossible. Regulatory audits were a recurring risk.

Solution:

Hir Infotech designed a compliance-first ETL pipeline that extracted data from all three banking APIs, applied standardized schema normalization with dynamic field mapping, and enforced GDPR data minimization at the transformation stage — removing personally identifiable information from analytics datasets and routing it through a separate, encrypted PII vault. Audit trail logging was embedded at every stage. Automated currency normalization and date standardization enabled unified reporting across Germany, Austria, and the Netherlands.
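Routing personal data to a separate vault at the transformation stage can be sketched as splitting each record into an analytics row and a vault row, linked only by a keyed pseudonym. The example assumes a hypothetical list of PII field names and uses HMAC-SHA256 from Python's standard library. Pseudonymized data still counts as personal data under GDPR, so this approach reduces exposure in the analytics layer rather than anonymizing it.

```python
import hashlib
import hmac

# Hypothetical field list; the real set depends on each source API.
PII_FIELDS = {"customer_id", "name", "email", "iban", "date_of_birth"}


def pseudonymize(value: str, key: bytes) -> str:
    """Keyed hash: stable for the same input and key, not reversible without the vault."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def split_record(record: dict, key: bytes):
    """Return (analytics_row, vault_row), joined only by a pseudonymous token."""
    token = pseudonymize(record["customer_id"], key)
    analytics = {k: v for k, v in record.items() if k not in PII_FIELDS}
    vault = {k: v for k, v in record.items() if k in PII_FIELDS}
    analytics["customer_token"] = token
    vault["customer_token"] = token
    return analytics, vault


# In practice the key would come from a secrets manager, never stored alongside the data.
analytics, vault = split_record(
    {"customer_id": "C-1001", "name": "Jana Weber", "email": "jana@example.de",
     "loan_amount": 25000, "country": "DE"},
    key=b"demo-key-not-for-production",
)
print(analytics)
```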

Results:

Client Testimonial:

“The compliance architecture Hir Infotech built gives us complete confidence in our regulatory reporting. We passed our most recent BaFin audit without a single data-related finding. That’s the value of building compliance in from the start.”

— Chief Data Officer, FinTech, Berlin, Germany

Client Background:

A multinational manufacturing company with operations in the UK, Australia, and Southeast Asia. The company ran SAP S/4HANA for ERP, a legacy warehouse management system, and three third-party logistics platforms operating in separate data environments.

Challenge:

Procurement, inventory, and logistics data lived in four incompatible systems. The company’s supply chain planning team relied on manually compiled Excel reports that took three days to produce — by which time the data was already outdated. Demand forecasting errors were costing the company an estimated £2.1M annually in excess inventory.

Solution:

Hir Infotech deployed a multi-source ETL pipeline that connected SAP S/4HANA, the WMS, and all three logistics APIs into a centralized Azure Data Lake. Custom transformation logic standardized SKU nomenclature, unit-of-measure conversions, supplier codes, and delivery lead times across all systems. A real-time inventory dashboard was built on Power BI, fed by micro-batch ETL jobs running every 15 minutes.
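Standardizing SKU codes and units of measure across ERP, WMS, and logistics feeds typically comes down to a lookup of conversion factors to one canonical unit per dimension. The minimal sketch below uses a hypothetical conversion table and SKU cleaning rule. It uses `Decimal` to avoid floating-point drift in quantities.

```python
from decimal import Decimal

# Hypothetical table: source unit code -> (canonical unit, factor to canonical unit).
TO_BASE = {
    "EA": ("each", Decimal("1")),
    "DZ": ("each", Decimal("12")),
    "KG": ("kg", Decimal("1")),
    "G": ("kg", Decimal("0.001")),
    "LB": ("kg", Decimal("0.45359237")),  # international avoirdupois pound
}


def normalize_sku(raw: str) -> str:
    """Hypothetical rule: uppercase, drop spaces, use '-' as the only separator."""
    return raw.strip().upper().replace(" ", "").replace("_", "-")


def to_base_units(qty, uom: str):
    """Convert a quantity to its canonical unit; unknown unit codes raise KeyError."""
    unit, factor = TO_BASE[uom.strip().upper()]
    return Decimal(str(qty)) * factor, unit


print(normalize_sku(" ab_123 x"), to_base_units(2, "dz"), to_base_units(10, "lb"))
```

Raising on unknown units, rather than passing quantities through unconverted, keeps bad rows out of the dashboard.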

Results:

Client Testimonial:

“Before Hir Infotech, our supply chain data was always telling us yesterday’s story. Now we have a live view of inventory, logistics, and supplier performance that our planning team actually trusts and uses every day.”

— Global Head of Supply Chain Analytics, Manufacturing Group, London, UK

Client Background:

A regional hospital network operating 14 facilities across Texas and Florida, using a combination of Epic EMR, legacy HL7 data feeds, and a third-party patient billing platform. The network employed a 40-person analytics team but lacked a unified data environment.

Challenge:

Patient records, billing data, and clinical outcomes lived in four disconnected systems with incompatible schemas. The analytics team spent 70% of its time reconciling data manually rather than generating insights. Quality reporting for CMS value-based care programs was error-prone and delayed.

Solution:

Hir Infotech built a HIPAA-compliant ETL architecture that extracted HL7 FHIR-formatted clinical data from Epic, billing records from the third-party platform, and operational metrics from facility management systems. All PII was encrypted and handled under HIPAA-compliant protocols with strict access controls. Transformation logic standardized ICD-10 coding, patient ID resolution, and facility identifiers across all 14 sites. Data was loaded into a Snowflake warehouse with automated quality scoring at every pipeline stage.
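One simple form of automated quality scoring is the share of rows in a batch that are complete and pass basic validity checks. The sketch below assumes hypothetical field names. It checks only the format of ICD-10 codes (a letter, a digit, an alphanumeric character, then an optional dot followed by up to four more characters). It does not confirm that a code exists in the official code set.

```python
import re

ICD10_PATTERN = re.compile(r"^[A-Z][0-9][0-9A-Z](\.[0-9A-Z]{1,4})?$")
REQUIRED = ("patient_id", "facility_id", "icd10")  # hypothetical required fields


def quality_score(rows) -> float:
    """Share of rows that are complete and carry a well-formed ICD-10 code."""
    if not rows:
        return 0.0  # an empty batch scores zero so it surfaces as a problem
    good = 0
    for row in rows:
        complete = all(row.get(f) for f in REQUIRED)
        if complete and ICD10_PATTERN.match(str(row["icd10"]).strip().upper()):
            good += 1
    return good / len(rows)


rows = [
    {"patient_id": "P1", "facility_id": "F01", "icd10": "E11.9"},
    {"patient_id": "P2", "facility_id": "F02", "icd10": "i10"},     # valid after uppercasing
    {"patient_id": "P3", "facility_id": "", "icd10": "J45.909"},    # incomplete
    {"patient_id": "P4", "facility_id": "F04", "icd10": "123"},     # malformed code
]
print(quality_score(rows))
```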

Results:

Client Testimonial:

“Hir Infotech understood the unique compliance requirements of healthcare data from day one. The pipeline they built is the most reliable infrastructure our analytics team has ever worked with.”

— Director of Clinical Analytics, Hospital Network, Texas, USA

Client Background:

A 6,000-employee enterprise with HR operations managed across Workday (Netherlands HQ), SAP SuccessFactors (France division), and a legacy HRIS in Spain. The People Analytics team needed a unified view of workforce data to support a strategic workforce planning initiative.

Challenge:

Three incompatible HR platforms meant that headcount, attrition, compensation, and performance data could only be aligned through slow, error-prone manual exports. GDPR restrictions on personal data processing across EU jurisdictions added a further layer of complexity. The workforce planning project was stalled.

Solution:

Hir Infotech designed a GDPR-compliant HR ETL pipeline with data residency maintained within the EU throughout all extraction and transformation stages. We built transformation logic to normalize job grade taxonomies, currency (EUR/GBP/USD), date conventions, and organizational hierarchy across all three platforms. Anonymized aggregate datasets were loaded into the analytics warehouse, with a separate GDPR-controlled PII layer accessible only to authorized HR personnel.
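Publishing only aggregate workforce figures is usually paired with small-cell suppression, which ensures no group is small enough to point to an individual. The sketch below assumes a minimum group size of 5. This is an illustrative threshold, not a legal standard, and suppression lowers re-identification risk without by itself guaranteeing anonymity.

```python
from collections import Counter


def headcount_by_group(employees, keys=("country", "job_grade"), min_group_size=5):
    """Count employees per group and drop groups smaller than min_group_size."""
    counts = Counter(tuple(emp[k] for k in keys) for emp in employees)
    # Suppressed groups are omitted entirely rather than reported as zero.
    return {group: n for group, n in counts.items() if n >= min_group_size}


employees = (
    [{"country": "NL", "job_grade": "G5"}] * 6
    + [{"country": "FR", "job_grade": "G7"}] * 2
)
print(headcount_by_group(employees))
```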

Results:

Client Testimonial:

“Hir Infotech navigated the GDPR complexity across three countries that had been blocking us for months. The ETL solution they delivered is clean, compliant, and exactly what our People Analytics team needed to move forward.”

— Chief People Officer, Enterprise Group, Amsterdam, Netherlands

Client Background:

A third-party logistics (3PL) provider headquartered in Paris, France, serving mid-market and enterprise e-commerce brands across Western Europe. The company’s commercial team targets logistics managers, supply chain directors, and operations leads at companies with €10M+ annual revenue.

Challenge:

The commercial team’s contact database was built primarily from trade exhibitions and partner referrals, resulting in 110,000 records with extremely poor data completeness — only 31% had verified email addresses, 22% had direct phone numbers, and fewer than 15% had accurate firmographic data aligned to the company’s ICP. GDPR compliance across the European data set was also questionable, with no consent or legitimate-interest documentation on file for the majority of records.

Solution:

Hir Infotech executed a GDPR-aligned data appending programme including: (1) legitimate-interest lawful basis documentation for all European contacts, (2) email address appending with hard bounce guarantee, (3) direct-dial phone number appending, (4) firmographic enrichment covering revenue, employee count, and e-commerce platform usage, and (5) logistics-specific technographic appending covering WMS and TMS platform usage.

Results:

Client Testimonial:

“GDPR compliance was our first concern, and Hir Infotech addressed it immediately, professionally, and completely. The data quality was excellent — but it was their understanding of European compliance that made them stand out from every other vendor we evaluated.”

— Commercial Director, 3PL Company, Paris

Client Background:

A Swedish smart energy company managing IoT sensor networks across 12,000 commercial buildings in Sweden, Denmark, and Iceland. The company generated over 4 billion sensor data points per day from HVAC, lighting, and energy metering devices.

Challenge:

The existing batch ETL system introduced 6–12 hour data latency, making real-time energy optimization impossible. Predictive maintenance alerts were firing too late, resulting in equipment failures that cost the company an average of SEK 8M annually. The data engineering team lacked the expertise to architect a low-latency streaming solution at this scale.

Solution:

Hir Infotech designed a streaming ETL architecture using Apache Kafka for high-throughput ingestion, Apache Flink for real-time stream processing, and Azure Data Lake for long-term storage. Transformation logic applied anomaly detection ML models to sensor streams, flagging equipment degradation patterns 48–72 hours before failure. Processed data was delivered to operational dashboards with sub-30-second latency.
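The case study describes ML-based anomaly detection on sensor streams. A far simpler statistical stand-in, shown here only to illustrate the streaming pattern, is a rolling z-score: each reading is compared with the mean and standard deviation of a trailing window. The window size and threshold are assumptions. This sketch flags outliers as they arrive and does not forecast failures hours in advance.

```python
import math
from collections import deque


class RollingZScore:
    """Flag readings more than `threshold` standard deviations from a trailing-window mean."""

    def __init__(self, window: int = 60, threshold: float = 3.0):
        self.values = deque(maxlen=window)  # oldest readings fall out automatically
        self.threshold = threshold

    def update(self, x: float) -> bool:
        is_anomaly = False
        n = len(self.values)
        if n >= 2:
            mean = sum(self.values) / n
            std = math.sqrt(sum((v - mean) ** 2 for v in self.values) / (n - 1))
            if std > 0 and abs(x - mean) / std > self.threshold:
                is_anomaly = True
        # Anomalies are still added to the window; a stricter variant could skip them.
        self.values.append(x)
        return is_anomaly


detector = RollingZScore(window=10, threshold=3.0)
readings = [20.0, 20.5, 19.5, 20.0, 20.5, 19.5, 20.0, 35.0]  # e.g. HVAC supply temperature
flags = [detector.update(r) for r in readings]
print(flags)
```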

Results:

Client Testimonial:

“The real-time pipeline Hir Infotech built processes more data in an hour than our old system handled in a week — and it does it reliably, every minute of every day. Our clients are seeing energy savings that directly justify the investment.”

— CTO, Smart Energy Company, Stockholm, Sweden

Client Background:

A mid-market B2B SaaS company headquartered in Austin, Texas, offering project management and workflow automation software. The company maintains a sales team of 45 representatives and manages an outbound pipeline targeting operations and IT leaders at companies with 200–2,000 employees.

Challenge:

The client’s CRM contained approximately 180,000 contact records accumulated over five years. Internal audits revealed that 38% of email addresses were bouncing, 24% of phone numbers were disconnected, and over 60% of records were missing firmographic fields like company revenue, employee count, and technology stack data. The SDR team was spending an average of 2.5 hours per day on manual data research, and campaign deliverability had declined significantly, triggering Google Workspace spam flags.

Solution:

Hir Infotech performed a full-scope data append project in three phases: (1) email address verification and re-appending using our AI match engine, (2) direct-dial phone number appending for all SDR-prioritised accounts, and (3) firmographic and technographic enrichment covering revenue bands, employee counts, SIC codes, CRM platform usage, and marketing automation stack for all 180,000 records.

Results:

Client Testimonial:

“Hir Infotech didn’t just clean our data — they fundamentally improved how our sales machine operates. The technographic append alone unlocked a targeting layer we didn’t know we were missing. Our SDRs are faster, our campaigns are cleaner, and the ROI showed up in the first 90 days.”

— VP of Revenue Operations, SaaS Platform, Austin TX

Client Background:

A fast-growing Australian retail brand selling through Shopify, Amazon AU, eBay, and its own progressive web app. The company had $28M in annual revenue and a small data team of three analysts who were overwhelmed by fragmented reporting.

Challenge:

Each sales channel generated separate, incompatible data exports in different formats and update frequencies. Customer identity could not be resolved across channels, making LTV calculations unreliable. Marketing attribution was disconnected from actual sales outcomes, leading to significant wasted ad spend.

Solution:

Hir Infotech deployed a unified e-commerce ETL pipeline that ingested data from all four sales channels via API connectors and custom extractors, applied probabilistic customer identity resolution across channels, normalized order, return, and margin data into a single schema, and loaded it into a Google BigQuery warehouse. A marketing attribution transformation layer mapped Google Ads, Meta Ads, and email campaign data to actual channel-level revenue.
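Probabilistic identity resolution scores how likely two channel records are to describe the same customer. The sketch below is a deliberately simplified weighted score with hand-picked weights and a hand-picked threshold (all hypothetical). A full probabilistic model such as Fellegi-Sunter would estimate field weights from the data instead.

```python
from difflib import SequenceMatcher

# Hypothetical field weights; a probabilistic model would learn these from labeled or unlabeled pairs.
WEIGHTS = {"email": 0.6, "phone": 0.25, "name": 0.15}


def _clean_phone(phone) -> str:
    """Crude heuristic: keep digits and compare the last 9, ignoring prefixes like +61 or a leading 0."""
    return "".join(ch for ch in (phone or "") if ch.isdigit())[-9:]


def match_score(a: dict, b: dict) -> float:
    score = 0.0
    email_a = (a.get("email") or "").strip().lower()
    if email_a and email_a == (b.get("email") or "").strip().lower():
        score += WEIGHTS["email"]
    phone_a, phone_b = _clean_phone(a.get("phone")), _clean_phone(b.get("phone"))
    if phone_a and phone_a == phone_b:
        score += WEIGHTS["phone"]
    # Partial credit for similar names (0.0 to 1.0 similarity).
    name_sim = SequenceMatcher(None, (a.get("name") or "").lower(), (b.get("name") or "").lower()).ratio()
    return score + WEIGHTS["name"] * name_sim


def same_customer(a: dict, b: dict, threshold: float = 0.7) -> bool:
    return match_score(a, b) >= threshold


shopify = {"email": "Liam@Mail.com", "phone": "+61 412 345 678", "name": "Liam Nguyen"}
amazon = {"email": "liam@mail.com", "phone": "0412 345 678", "name": "L. Nguyen"}
ebay = {"email": "other@mail.com", "phone": "", "name": "Liam Nguyen"}
print(same_customer(shopify, amazon), same_customer(shopify, ebay))
```

A name match alone never clears the threshold here, which reflects the assumption that common names are weak evidence.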

Results:

Client Testimonial:

“In three months, Hir Infotech gave our small data team the capabilities of a team three times its size. The pipeline just works — and the customer insights we’re getting are genuinely changing how we market and invest.”

— Head of Digital, Retail Brand, Sydney, Australia

Rely on Hir Infotech for 95%+ accurate data, meticulously verified to fuel your B2B success. Our global scraping solutions deliver trusted insights for confident decision-making worldwide.

With 13+ years of expertise, Hir Infotech has served 2,745+ clients globally. Our proven scraping solutions drive B2B success across the USA, Europe, and Australia.

Hir Infotech has spent 13+ years engineering ETL pipelines for 2,745+ enterprise and mid-market clients across the USA, Europe, and Australia. Our AI-powered ETL solutions are fast to deploy, compliant by design, and built to scale with your business, not against it. Request your free ETL pipeline scoping session today: our data engineering team will analyze your sources, outline the right architecture, and show you exactly what your unified data environment can look like, with zero obligation.

Stop letting fragmented data slow your decisions. Hir Infotech delivers compliant, AI-driven ETL pipelines built for the speed, scale, and accuracy your business deserves — from Berlin to Boston, Sydney to Stockholm.

Eliminate data silos by consolidating all business data (CRM, ERP, marketing, finance, logistics, and HR) into one reliable, analytics-ready warehouse. Your teams make decisions from the same trusted dataset, avoiding conflicting reports and costly misalignment across departments.

Hir Infotech builds ETL connectors for 200+ source and destination systems — including Salesforce, SAP, Oracle, HubSpot, Workday, Snowflake, BigQuery, Redshift, Databricks, AWS S3, and all major cloud platforms. No rip-and-replace required.

Push analytics-enriched data back into Salesforce, HubSpot, Marketo, SAP, and custom applications via Reverse ETL, turning your data warehouse from a passive reporting layer into an active engine that drives sales, marketing, and operations in real time.

Hir Infotech’s AI-augmented pipelines automate schema detection, data cleansing, deduplication, and anomaly resolution, reducing manual data engineering effort by up to 70%. Your team focuses on building business value, not maintaining fragile scripts.

Our cloud-native ETL architectures scale dynamically with your data — from gigabytes to petabytes — without performance degradation. Whether you’re a 200-person scale-up or a 50,000-employee enterprise, our pipelines grow with your business seamlessly.

Move beyond overnight batch jobs. Our streaming ETL delivers fresh, accurate data to dashboards, ML models, and operational systems with sub-minute latency — enabling real-time fraud detection, live inventory management, and instant campaign performance visibility.

AI-powered monitoring continuously tracks pipeline health, auto-corrects common failure modes, and alerts your team to critical issues before they impact downstream analytics. Most schema changes are resolved without human intervention, maintaining SLA continuity.
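
As a simplified, non-AI stand-in for this kind of monitoring, a common baseline check flags a pipeline run whose row count deviates sharply from recent history. The sketch below uses a z-score against previous runs; the function name and the default threshold of 3 standard deviations are illustrative assumptions, not Hir Infotech's production logic.

```python
from statistics import mean, stdev


def is_volume_anomaly(history: list[int], current: int, z_threshold: float = 3.0) -> bool:
    """Flag `current` if it lies more than `z_threshold` standard deviations from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return current != mu  # perfectly flat history: any change is unusual
    return abs(current - mu) / sigma > z_threshold


if __name__ == "__main__":
    daily_rows = [10_120, 9_980, 10_050, 10_200, 9_900]
    print(is_volume_anomaly(daily_rows, 10_080))  # False
    print(is_volume_anomaly(daily_rows, 2_300))   # True
```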

Every pipeline is engineered with compliance-first principles: data minimization, encryption at rest and in transit, audit trail logging, data subject rights support, and role-based access controls aligned with GDPR (EU), CCPA (USA), HIPAA (healthcare), and PSD2 (financial services).

Our structured delivery methodology gets your first ETL pipeline live in as few as 2–4 weeks. Pre-built transformation templates for common business domains — finance, marketing, HR, e-commerce — accelerate deployment and reduce time-to-value dramatically.

Hir Infotech’s ETL clients report measurable results: 29–41% improvements in forecast accuracy, 60–75% reductions in manual analytics labor, and $480K–£1.4M in documented cost savings within 12 months. We are not a data vendor — we are a business outcomes partner.

At Hir Infotech, we offer flexible pricing models to power your data-driven success. Choose Subscription-Based Pricing for ongoing scraping needs with predictable costs, Pay-As-You-Go for one-off tasks billed by usage, Project-Based Flat Fees for tailored, end-to-end solutions, or Hourly Pricing for custom development and complex challenges. Whatever your budget or project scope, our expert team delivers cost-effective, high-quality web scraping solutions designed to fit your needs.

Project-Based Flat Fee: A one-time fee is charged for a specific project, regardless of volume or duration, based on scope and complexity.

Hourly Pricing: Billed according to the time spent developing, running, or maintaining the scraper; often used for custom or consulting-heavy projects.

Pay-As-You-Go: Charged based on actual usage, such as per request, per GB of bandwidth, or per page scraped, with no fixed commitment.

Subscription-Based Pricing: Clients pay a recurring fee (monthly or annually) for access to scraping services, often tiered by usage limits such as the number of requests, pages scraped, or data points extracted.

We begin by collaborating with you to define your data needs—be it for a one-time project, recurring insights, or custom solutions. Whether you opt for Pay-As-You-Go flexibility, a Project-Based Flat Fee, Hourly expertise, or a Subscription plan, we align our approach to your objectives.

Our team identifies the websites and data sources critical to your project. We analyze site structures, assess complexity (e.g., static vs. dynamic content), and plan the most efficient scraping strategy, ensuring compliance with public data access norms.

Using purpose-built tools and custom scrapers, we extract data at scale, handling challenges such as JavaScript-rendered pages and anti-scraping measures.

Raw data is parsed, cleaned, and structured into formats like CSV, JSON, or Excel. We remove duplicates, correct errors, and validate accuracy to ensure you receive reliable, ready-to-use datasets.
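
A minimal sketch of this cleaning step using only the Python standard library, assuming scraped records arrive as dictionaries with hypothetical `name`, `price`, and `url` fields. It collapses stray whitespace, parses prices into exact decimals, drops rows with missing fields or unparseable prices, removes duplicates by normalized URL, and writes the result to both CSV and JSON.

```python
import csv
import json
from decimal import Decimal, InvalidOperation

FIELDS = ["name", "price", "url"]  # hypothetical output schema


def clean_records(raw: list[dict]) -> list[dict]:
    """Normalize, validate, and deduplicate scraped records."""
    seen_urls = set()
    cleaned = []
    for rec in raw:
        name = " ".join(str(rec.get("name", "")).split())  # collapse stray whitespace
        url = str(rec.get("url", "")).strip().rstrip("/")   # normalize trailing slash
        price_text = str(rec.get("price", "")).replace("$", "").replace(",", "").strip()
        try:
            price = Decimal(price_text)
        except InvalidOperation:
            continue  # unparseable price: drop here (a real job might route it to review)
        if not name or not url or url in seen_urls:
            continue  # missing required field, or duplicate of an earlier row
        seen_urls.add(url)
        cleaned.append({"name": name, "price": str(price), "url": url})
    return cleaned


def write_outputs(rows: list[dict], csv_path: str, json_path: str) -> None:
    """Deliver the same cleaned rows as CSV and JSON."""
    with open(csv_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        writer.writeheader()
        writer.writerows(rows)
    with open(json_path, "w", encoding="utf-8") as f:
        json.dump(rows, f, indent=2)


if __name__ == "__main__":
    raw = [
        {"name": "  Widget   A ", "price": "$1,299.00", "url": "https://example.com/a/"},
        {"name": "Widget A", "price": "1299", "url": "https://example.com/a"},
        {"name": "Widget B", "price": "n/a", "url": "https://example.com/b"},
    ]
    print(clean_records(raw))
    # [{'name': 'Widget A', 'price': '1299.00', 'url': 'https://example.com/a'}]
```

`Decimal` is used instead of `float` so that prices such as 0.10 are stored exactly.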

Depending on your pricing model, we deliver results in the format and on the schedule you need.

We monitor site changes, adapt scrapers as needed, and provide support to keep your data flowing seamlessly. Subscription clients enjoy continuous updates, while Hourly clients benefit from hands-on refinements.

ETL stands for Extract, Transform, Load — a structured, governed process that goes far beyond simple data movement. ETL extracts raw data from diverse sources (databases, APIs, flat files, cloud platforms), applies intelligent transformation logic (cleansing, normalization, deduplication, business rule application, schema alignment), and loads structurally consistent, high-quality data into a destination system such as a data warehouse, lake, or BI platform. Unlike raw data replication, ETL ensures that data is accurate, compliant, business-context-aware, and analytics-ready by the time it reaches its destination. For B2B enterprises, this distinction is critical: it is not enough to move data — it must be trustworthy.
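
To make the three stages concrete, here is a minimal sketch using only the Python standard library. It extracts rows from CSV text, transforms them (trimming, normalizing country codes, casting amounts, skipping unparseable rows, deduplicating), and loads the result into a SQLite table. The column names and the in-memory database are illustrative assumptions; a production pipeline would target a warehouse such as Snowflake or BigQuery and quarantine bad rows rather than silently skip them.

```python
import csv
import io
import sqlite3

# Hypothetical source extract: note the stray spaces, mixed-case codes,
# one unparseable amount, and one duplicate row.
RAW_CSV = """customer_id,country,amount
 101 ,us,250.00
102,DE,99.5
103,fr,not-a-number
101,US,250.00
"""


def extract(text: str) -> list[dict]:
    """Extract: read raw rows from a CSV source."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict]) -> list[tuple]:
    """Transform: cleanse, normalize, cast types, and deduplicate."""
    seen = set()
    out = []
    for row in rows:
        try:
            record = (
                int(row["customer_id"].strip()),
                row["country"].strip().upper(),
                float(row["amount"]),
            )
        except ValueError:
            continue  # a real pipeline would quarantine this row
        if record not in seen:
            seen.add(record)
            out.append(record)
    return out


def load(records: list[tuple], conn: sqlite3.Connection) -> None:
    """Load: write the cleaned records into the destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (customer_id INTEGER, country TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", records)
    conn.commit()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    load(transform(extract(RAW_CSV)), conn)
    print(conn.execute("SELECT * FROM sales ORDER BY customer_id").fetchall())
    # [(101, 'US', 250.0), (102, 'DE', 99.5)]
```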

Deployment timelines depend on the complexity of source systems, number of data sources, and transformation requirements. For straightforward pipelines connecting 2–4 systems with standard transformation logic, Hir Infotech typically delivers a production-ready pipeline within 2–4 weeks. Complex, multi-source enterprise ETL projects involving 10+ source systems, custom business logic, and compliance requirements typically take 6–12 weeks from scoping to live deployment. We use pre-built connector libraries and domain-specific transformation templates to accelerate delivery. All projects follow a structured delivery methodology with weekly progress checkpoints, staged testing environments, and documented handover.

GDPR compliance is embedded into every stage of our ETL architecture, not added as an afterthought. We implement data minimization at the extraction stage (collecting only necessary data), AES-256 encryption in transit and at rest, pseudonymization and anonymization for analytics datasets, role-based access controls, full audit trail logging for all data movements, and data subject rights mechanisms (access, correction, deletion). For clients in Germany, France, the Netherlands, Spain, Italy, Austria, Sweden, Denmark, Switzerland, and Iceland, we maintain EU data residency throughout all pipeline stages. Our compliance frameworks are updated in line with EDPB guidance (including its most recent 2026 work programme) and with amendments to the GDPR itself.
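
Two of the controls named above, data minimization and pseudonymization, can be illustrated in a few lines. The sketch below assumes a hypothetical allowlist of fields the analytics use case needs and replaces the email address with a keyed HMAC-SHA256 token, so records can still be joined across systems without exposing the raw identifier. Under the GDPR, pseudonymized data is still personal data, and the key must be stored separately from the dataset.

```python
import hashlib
import hmac

# Hypothetical allowlist: only the fields this analytics use case actually needs.
ALLOWED_FIELDS = {"email", "country", "plan", "signup_date"}


def pseudonymize(value: str, key: bytes) -> str:
    """Deterministic keyed token: the same input and key always give the same token."""
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(key, normalized, hashlib.sha256).hexdigest()


def minimize_and_pseudonymize(record: dict, key: bytes) -> dict:
    # Data minimization: drop every field not on the allowlist (e.g. phone numbers).
    kept = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    # Pseudonymization: replace the direct identifier with a keyed token.
    if "email" in kept:
        kept["email_token"] = pseudonymize(kept.pop("email"), key)
    return kept


if __name__ == "__main__":
    key = b"load-from-a-secrets-manager"  # placeholder; never hard-code real keys
    raw = {"email": "Anna@Example.de", "phone": "+49 30 1234567",
           "country": "DE", "plan": "pro", "signup_date": "2025-11-03"}
    print(minimize_and_pseudonymize(raw, key))
```

A keyed HMAC is used rather than a plain hash because unkeyed hashes of email addresses can be reversed by hashing candidate addresses.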

Yes. Hir Infotech maintains pre-built, production-tested connectors for more than 200 source and destination systems including Salesforce Sales Cloud and Service Cloud, SAP S/4HANA, SAP SuccessFactors, Oracle ERP, NetSuite, Workday, HubSpot, Marketo, Microsoft Dynamics 365, Zendesk, Shopify, Magento, and all major cloud data warehouses (Snowflake, BigQuery, Amazon Redshift, Azure Synapse). For systems without standard connectors, our engineering team builds custom API-based or database-level connectors within project scope. Integration does not require decommissioning or replacing existing systems — our ETL layer works alongside your current stack.

Batch ETL processes and transfers data at scheduled intervals (hourly, daily, or weekly) — appropriate for financial period reporting, overnight data warehouse refreshes, and compliance reporting where near-real-time data is not critical. Real-time (streaming) ETL processes data continuously as it is generated, delivering sub-second to sub-minute latency — essential for fraud detection, live customer behavior tracking, IoT sensor monitoring, and dynamic pricing engines. Hir Infotech designs both architectures and often implements hybrid approaches: real-time streaming for operational data and high-frequency events, combined with batch pipelines for historical and reporting workloads. We assess your business requirements during scoping to recommend the appropriate architecture.
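
Much of the difference between the two modes comes down to when results are computed. As a simplified sketch of the streaming side, the generator below assigns each event to a 60-second tumbling window as it arrives and emits a window's total as soon as an event from a later window appears, rather than waiting for a nightly job. The `(epoch_seconds, amount)` event shape is a hypothetical assumption, and the sketch assumes in-order events; production stream processors such as Apache Flink or Kafka Streams also handle late and out-of-order data.

```python
from collections.abc import Iterable, Iterator


def tumbling_window_totals(
    events: Iterable[tuple[int, float]], window_seconds: int = 60
) -> Iterator[tuple[int, float]]:
    """Yield (window_start, total) as soon as each window closes.

    Assumes events arrive in timestamp order; late events are not handled.
    """
    current_start = None
    total = 0.0
    for ts, amount in events:
        start = ts - ts % window_seconds  # align the timestamp to its window
        if current_start is None:
            current_start = start
        elif start != current_start:
            yield current_start, total  # window closed: emit immediately
            current_start, total = start, 0.0
        total += amount
    if current_start is not None:
        yield current_start, total  # flush the final open window


if __name__ == "__main__":
    stream = [(0, 10.0), (30, 5.0), (61, 7.5), (119, 2.5), (180, 1.0)]
    for window in tumbling_window_totals(stream):
        print(window)
    # (0, 15.0)
    # (60, 10.0)
    # (180, 1.0)
```

A batch job would compute the same totals, but only after the whole day's events had landed.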

Traditional hand-coded ETL pipelines are static — they break when source schemas change, require ongoing manual maintenance, and cannot adapt to new data patterns. AI-augmented ETL, as deployed by Hir Infotech, introduces machine learning-driven schema detection that adapts to structural changes automatically, NLP-based data classification for unstructured content, intelligent deduplication that resolves identity conflicts across systems, predictive anomaly detection that flags data quality issues before they propagate, and self-healing logic that resolves common failures without human intervention. Industry benchmarks indicate that AI-driven ETL reduces manual pipeline maintenance effort by 60–80% and significantly improves data accuracy — directly translating to lower operational costs and higher data trustworthiness for enterprise analytics teams.
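
Rule-based schema drift detection is a common baseline underneath the adaptive schema handling described above. The sketch below is not a machine-learning model: it compares an incoming record against a hypothetical expected schema, classifies the differences, and applies an illustrative policy in which new columns are absorbed automatically while removed columns and type changes are escalated to a person.

```python
# Hypothetical expected schema: field name -> accepted Python type(s).
EXPECTED_SCHEMA = {
    "order_id": int,
    "customer_id": int,
    "total": (int, float),
    "currency": str,
}


def detect_drift(record: dict, expected: dict = EXPECTED_SCHEMA) -> dict:
    """Classify how an incoming record differs from the expected schema."""
    added = sorted(set(record) - set(expected))
    missing = sorted(set(expected) - set(record))
    type_changes = sorted(
        name
        for name, accepted in expected.items()
        if name in record and record[name] is not None and not isinstance(record[name], accepted)
    )
    return {"added": added, "missing": missing, "type_changes": type_changes}


def can_auto_resolve(drift: dict) -> bool:
    """Hypothetical policy: absorb new columns; escalate removals and type changes."""
    return not drift["missing"] and not drift["type_changes"]


if __name__ == "__main__":
    incoming = {"order_id": 7, "customer_id": 42, "total": "19.99",
                "currency": "EUR", "channel": "web"}
    drift = detect_drift(incoming)
    print(drift)  # {'added': ['channel'], 'missing': [], 'type_changes': ['total']}
    print(can_auto_resolve(drift))  # False
```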

Data quality is enforced at multiple checkpoints throughout our ETL pipelines. At extraction, we apply source profiling to detect anomalies, missing values, duplicates, and format inconsistencies before transformation begins. During transformation, we apply configurable data quality rules aligned with your business definitions — including referential integrity checks, range validation, format standardization, and business-rule enforcement. At load, we run pre-load quality scoring to ensure only records meeting defined quality thresholds enter your destination system. Our pipelines maintain a consistent 99.7% data accuracy rate across production deployments. Failed records are quarantined in a separate exception queue with full lineage documentation for review and remediation.
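
A simplified sketch of the transform-stage rules, pre-load gate, and exception queue described above. Each rule is a named predicate; records that pass every rule continue to the load step, while failures are quarantined with their row position and the names of the rules they broke. The rule set, field names, reference data, and the 95% gate are illustrative assumptions.

```python
# Hypothetical reference data for the referential-integrity check.
KNOWN_CUSTOMERS = {"C-001", "C-002"}

# Hypothetical rules: (rule name, predicate that returns True when the record passes).
RULES = [
    ("invoice_id_present", lambda r: bool(r.get("invoice_id"))),
    ("amount_in_range",
     lambda r: isinstance(r.get("amount"), (int, float)) and 0 <= r["amount"] <= 1_000_000),
    ("currency_iso_format",
     lambda r: isinstance(r.get("currency"), str) and len(r["currency"]) == 3 and r["currency"].isupper()),
    ("known_customer", lambda r: r.get("customer_id") in KNOWN_CUSTOMERS),
]


def validate(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split records into (passed, quarantined); quarantined entries keep their failed rule names."""
    passed, quarantined = [], []
    for index, record in enumerate(records):
        failures = [name for name, check in RULES if not check(record)]
        if failures:
            quarantined.append({"row": index, "record": record, "failed_rules": failures})
        else:
            passed.append(record)
    return passed, quarantined


def batch_passes(passed: list[dict], quarantined: list[dict], min_pass_rate: float = 0.95) -> bool:
    """Pre-load gate: load the batch only if a high enough share of records passed."""
    total = len(passed) + len(quarantined)
    return total > 0 and len(passed) / total >= min_pass_rate


if __name__ == "__main__":
    batch = [
        {"invoice_id": "INV-1", "customer_id": "C-001", "amount": 120.0, "currency": "EUR"},
        {"invoice_id": "", "customer_id": "C-009", "amount": -5, "currency": "eur"},
    ]
    ok, bad = validate(batch)
    print(len(ok), bad[0]["failed_rules"])
    print("load batch" if batch_passes(ok, bad) else "hold batch for review")
```

Recording every failed rule, not just the first, makes the exception queue easier to triage.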

Yes. Hir Infotech’s AI-powered ETL capability extends beyond structured databases and APIs to include unstructured and semi-structured data sources: PDF and Word documents, email threads, website content, social media feeds, JSON and XML APIs, HL7 clinical data feeds, and IoT sensor streams. We use natural language processing (NLP) and document intelligence models to extract structured entities and metadata from unstructured sources and incorporate them into your data pipeline alongside traditional structured data. This is particularly valuable for industries including healthcare (clinical notes), legal (contract analysis), financial services (regulatory documents), and e-commerce (product catalog enrichment).

Every ETL pipeline delivered by Hir Infotech includes a purpose-built operations dashboard providing full visibility into pipeline health, data throughput, transformation success rates, error queues, latency metrics, and compliance audit logs. Automated alerting notifies your engineering or data operations team via email, Slack, PagerDuty, or your preferred incident management tool when pipelines experience errors, latency spikes, or data quality failures. All pipeline activity is logged to an immutable audit trail, meeting GDPR and SOX documentation requirements. For enterprise clients, we offer managed monitoring services with defined SLAs and escalation paths as part of our ongoing support packages.
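
A minimal sketch of threshold-based alerting over per-run pipeline metrics. The metric names and threshold values are hypothetical (real values would come from the agreed SLA), and delivering the messages to email, Slack, or PagerDuty is left out, since each channel has its own API.

```python
from dataclasses import dataclass


@dataclass
class RunMetrics:
    pipeline: str
    error_count: int
    latency_seconds: float
    quality_pass_rate: float  # share of records passing quality rules, 0.0-1.0


# Hypothetical thresholds.
MAX_ERRORS = 0
MAX_LATENCY_SECONDS = 60.0
MIN_PASS_RATE = 0.98


def evaluate(m: RunMetrics) -> list[str]:
    """Return one alert message per breached threshold (an empty list means healthy)."""
    alerts = []
    if m.error_count > MAX_ERRORS:
        alerts.append(f"[{m.pipeline}] {m.error_count} errors in last run")
    if m.latency_seconds > MAX_LATENCY_SECONDS:
        alerts.append(
            f"[{m.pipeline}] latency {m.latency_seconds:.0f}s exceeds {MAX_LATENCY_SECONDS:.0f}s"
        )
    if m.quality_pass_rate < MIN_PASS_RATE:
        alerts.append(
            f"[{m.pipeline}] quality pass rate {m.quality_pass_rate:.1%} below {MIN_PASS_RATE:.0%}"
        )
    return alerts


if __name__ == "__main__":
    run = RunMetrics("crm_to_warehouse", error_count=0, latency_seconds=95.0, quality_pass_rate=0.991)
    for alert in evaluate(run):
        print(alert)
    # [crm_to_warehouse] latency 95s exceeds 60s
```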

Building ETL infrastructure in-house requires experienced data engineers (median salary $130,000–$160,000 USD in the USA; €80,000–€110,000 in Western Europe), significant time investment (typically 3–9 months for a production-ready multi-source pipeline), ongoing maintenance as source systems evolve, and dedicated tooling and infrastructure costs. Hir Infotech’s managed ETL services deliver production pipelines faster, at a lower total cost, with built-in compliance, monitoring, and support — without the hiring risk or knowledge dependency. Our clients consistently document ROI within 6–12 months through reduced analytics labor costs (typically 60–75% reduction in data wrangling effort), improved decision accuracy, and direct cost savings identified through newly unified data.

+91 99099 90610

+91 94096 28528

inquiry@hirinfotech.com